Is the universe fine-tuned? In other words, were physical laws and constants of nature somehow chosen to allow complex life to arise?

In the past sixty-five years, science has gradually revealed a shocking lesson: by all rights, you shouldn’t be alive. None of us should.

It was only too easy for something to have gone wrong. For instance, had gravity been a little stronger, or had carbon been slightly different, or had neutrinos not existed — in all cases the result is disaster:

A dead universe — devoid of chemistry, life, and consciousness.

Yet somehow, through incredible luck, divine intervention, or otherwise, we dodged every bullet to enjoy a universe with life.

Against all odds, the universe is a place where life is possible. To what can we ascribe this great fortune? How can it be explained?

What really interests me is whether God could have created the world any differently; in other words, whether the requirement of logical simplicity admits a margin of freedom.

Albert Einstein

Why is the universe the way it is? Could it have been any other way?

First Clues

In the past century, cosmologists and particle physicists developed a nearly complete understanding of our world and cosmos.

Our current understanding now covers a range from the astronomical scales of superclusters down to the subatomic scales of quarks.

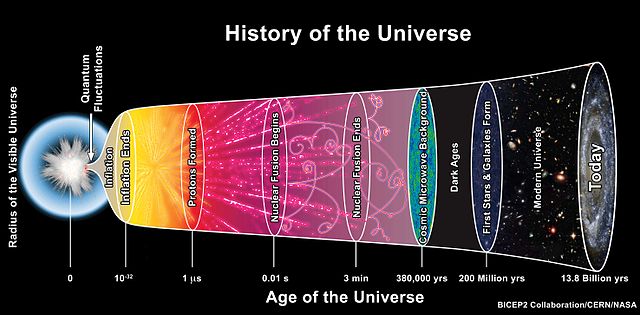

Between the 1960s and 1980s, cosmologists developed a model of the history and initial conditions of the universe known as the Lambda-CDM model, or the standard model of cosmology.

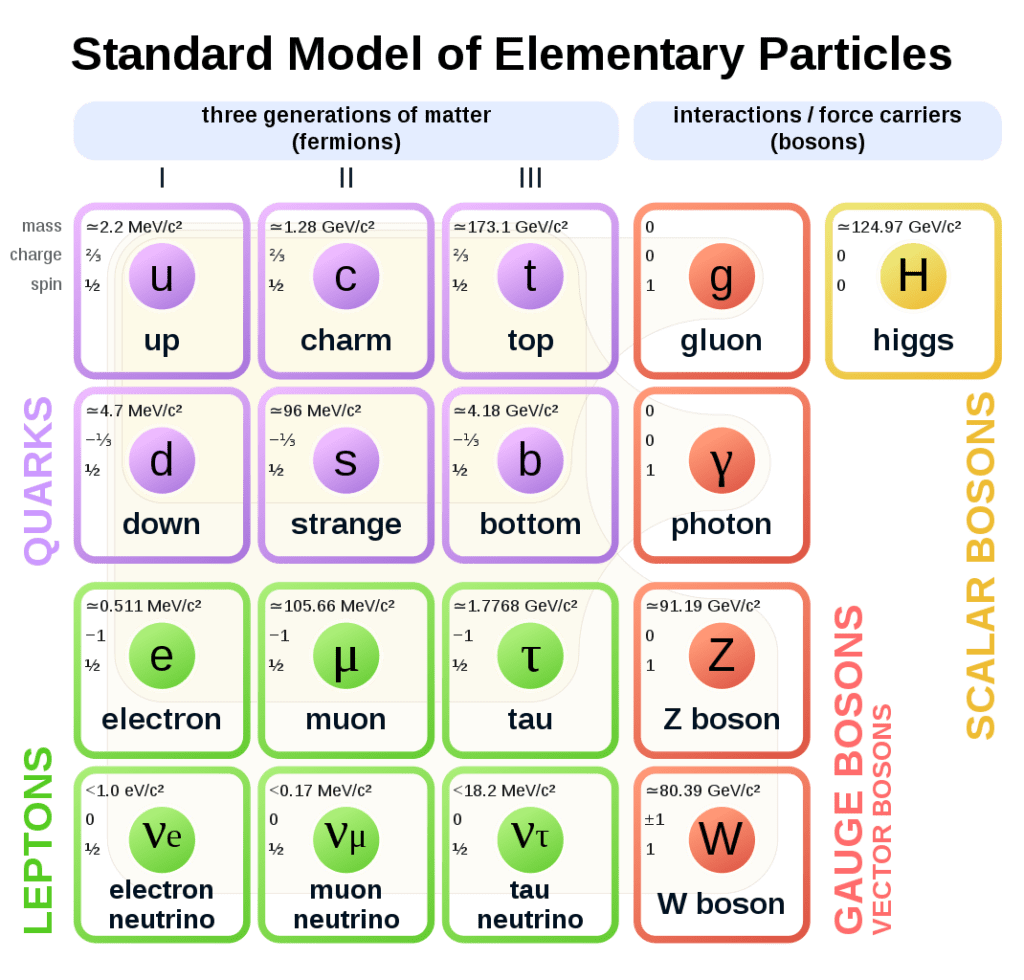

Likewise, between the 1950s and 1970s, particle physicists developed a model that describes fundamental forces of nature, and all known elementary particles. It is called the standard model of particle physics.

Aside from describing known particles, this model predicted particles that had never been seen. For instance, it predicted the Higgs boson.

But these new theories also disturbed physicists and cosmologists.

The more they came to understand the particulars of our universe: the properties of particles, the strengths of the forces, the initial conditions of the big bang — the more they realized we shouldn’t even be here.

As we look out into the universe and identify the many accidents of physics and astronomy that have worked together to our benefit, it almost seems as if the universe must in some sense have known we were coming.

Freeman Dyson in “Energy in the Universe” (1971)

A Perfect Balance

In the 1960s, cosmology transitioned from a loose set of unconfirmed speculations into a hard science backed by observation. In this emerging field, cosmologists found a coincidence that couldn’t be brushed off as mere luck — it demanded explanation.

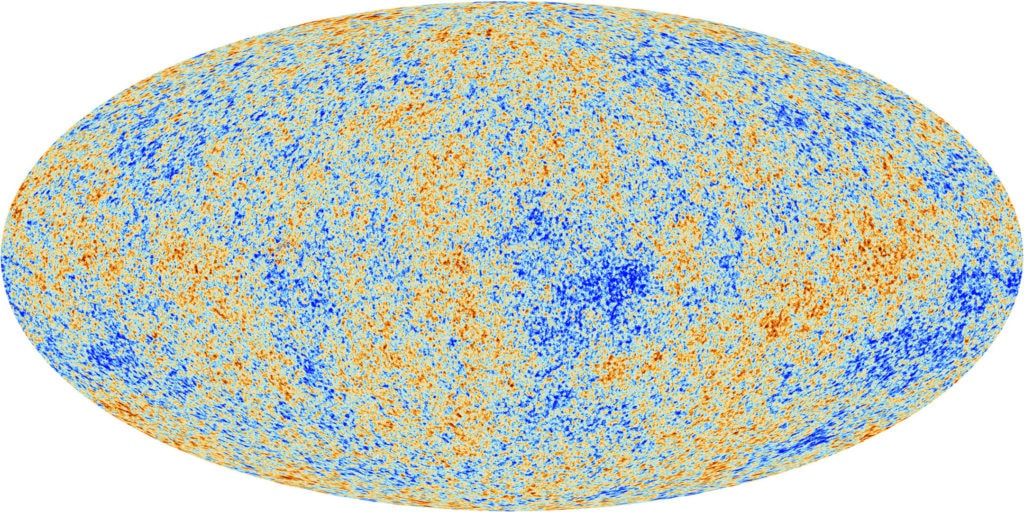

In 1948, Ralph Alpher and Robert Herman were the first to predict that if the big bang happened, space should be filled with a uniform radiation emanating from all directions in the sky — a primordial heat remaining from the earliest moments of the universe.

At the time, there was no technology to detect this radiation. Alpher’s and Herman’s prediction soon fell into obscurity and was forgotten.

Sixteen years later, Robert Dicke, the chairman of physics at Princeton, rediscovered this prediction. With his colleagues Jim Peebles and David Wilkinson, he planned to build a device able to detect this radiation. They could thereby confirm or disprove the big bang.

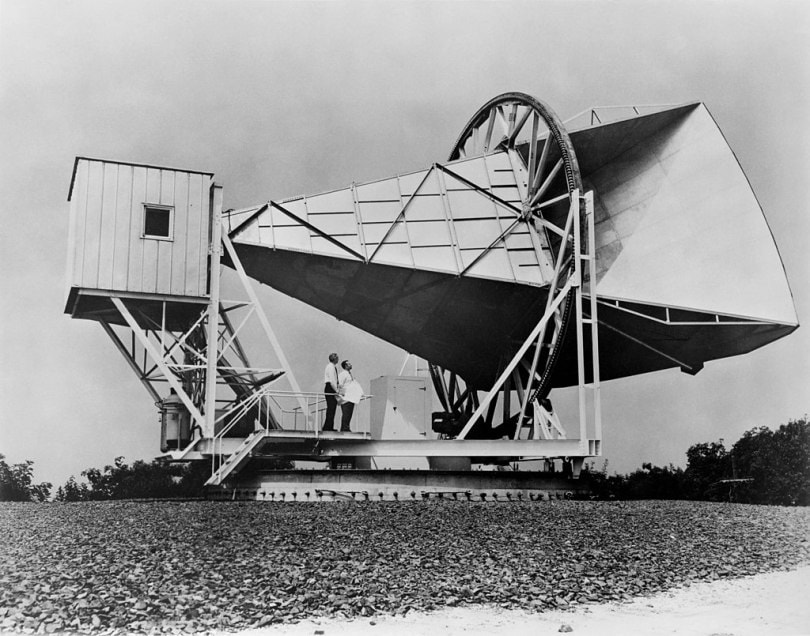

But as fate had it, they were beaten to the punch by two radio astronomers: Arno Penzias and Robert Wilson of Bell Labs.

In 1964, Penzias and Wilson spent a frustrating year trying to isolate the source of a signal interfering with their observations, to no avail. A mutual friend suggested that they reach out to Robert Dicke, who was a short drive away from their facility in Holmdel, New Jersey.

Penzias and Wilson called Dicke, who was in his office with Peebles and Wilkinson. After hearing the details of this interference signal from the Bell Labs researchers, Dicke turned to his colleagues and said, “Well boys, we’ve been scooped.”

The Princeton team drove to the Bell Labs facility to hear the signal for themselves. The signal had all the right characteristics. Its temperature, distribution, consistency, directionality, and intensity all matched perfectly with predictions of the big bang theory.

They listened to a cosmic hum of radiation that had been traveling through space for billions of years — the universe had a beginning.

It was one of the greatest scientific discoveries of the 20th century. It earned Penzias and Wilson the 1978 Nobel Prize in physics.

But this wasn’t the end of the story.

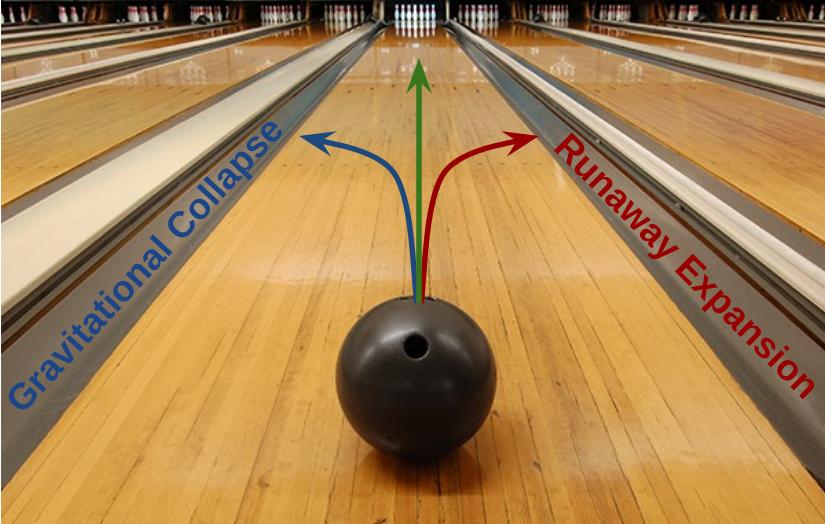

In 1969, Dicke recognized an unsettling consequence of the big bang and the expansion of the universe: it is highly unstable.

Had the density of the universe been slightly greater, the universe would have collapsed billions of years ago, long before life formed. Had the density been slightly less, the universe would have expanded too fast for galaxies and stars to form.

It was as though the universe sat on a knife edge. Had it not been so balanced, it would have fallen to either side and we wouldn’t be here.

Modern observations confirm that space is flat to within the precision of our measurements (within 1%). Because the balance is unstable, any imbalance grows over time, which implies that the balance was even more precise in the past.

Calculations reveal that if the curvature is below 1% today, then one second after the big bang, it would have been 10^{15} times smaller.

The situation is analogous to rolling a bowling ball down a lane so long that the ball could roll for billions of years. If we find the ball drifted less than 1% from center after rolling for billions of years, it suggests the ball must have been even closer to center when it started.
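The growth of the imbalance can be estimated with a rough sketch. It assumes the standard textbook scalings (the deviation from flatness grows in proportion to time while radiation dominates, and as time to the 2/3 power while matter dominates), a matter-radiation equality at roughly 50,000 years, and an age of 13.8 billion years, and it ignores dark energy. These inputs and the function name are illustrative assumptions, not figures from the calculation cited above.

```python
# Rough estimate of how much a deviation from flatness grows after t = 1 s.
# Assumptions (not from the article): equality at ~50,000 years,
# an age of 13.8 billion years, and no dark energy.

SECONDS_PER_YEAR = 3.156e7

T_START = 1.0                            # one second after the big bang
T_EQUALITY = 5.0e4 * SECONDS_PER_YEAR    # assumed matter-radiation equality
T_TODAY = 13.8e9 * SECONDS_PER_YEAR      # assumed present age


def curvature_growth(t_start: float, t_equality: float, t_end: float) -> float:
    """Factor by which |Omega - 1| grows between t_start and t_end."""
    radiation_era = t_equality / t_start               # grows like t
    matter_era = (t_end / t_equality) ** (2.0 / 3.0)   # grows like t^(2/3)
    return radiation_era * matter_era


growth = curvature_growth(T_START, T_EQUALITY, T_TODAY)
deviation_today = 0.01                   # "within 1%" today
deviation_at_one_second = deviation_today / growth

print(f"growth factor since 1 s: {growth:.1e}")
print(f"allowed deviation at 1 s: {deviation_at_one_second:.1e}")
```

Under these assumptions the growth factor falls between 10^{15} and 10^{16}, the same order of magnitude as the figure quoted above.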

If the rate of expansion one second after the Big Bang had been smaller by even one part in a hundred thousand million million, it would have recollapsed before it reached its present size. On the other hand, if it had been greater by a part in a million, the universe would have expanded too rapidly for stars and planets to form.

Stephen Hawking in “A Brief History of Time” (1996 edition)

It appears as though we are the winners of a cosmic lottery. Had there been just slightly more mass, then the universe would have collapsed billions of years ago. Had there been slightly less, there would be no galaxies, stars, planets, or humans — there’d be no time for any structures to condense out of a rapidly expanding interstellar gas.

Our universe is a ‘Goldilocks’ universe where the density is just right.

Monkeying with Physics

As long as human beings have looked up at the night’s sky, we’ve wondered what the stars were and what makes them shine.

But despite wondering for hundreds of thousands of years, only in the last 80 have we come to understand how and why the stars shine.

In 1920, Arthur Eddington speculated that fusion of hydrogen into helium powered the stars. But it wasn’t until 1939 that Hans Bethe did the math to prove it, earning Bethe the 1967 Nobel Prize in physics.

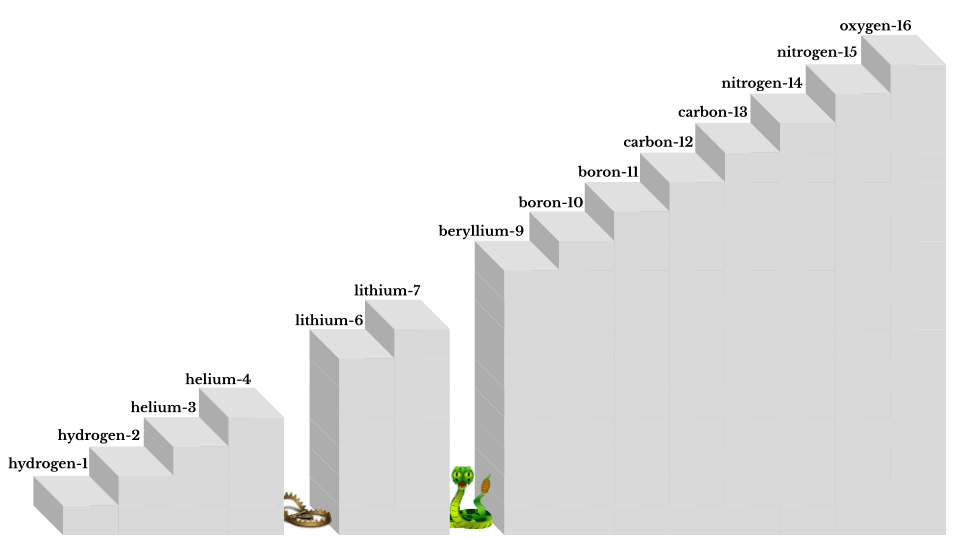

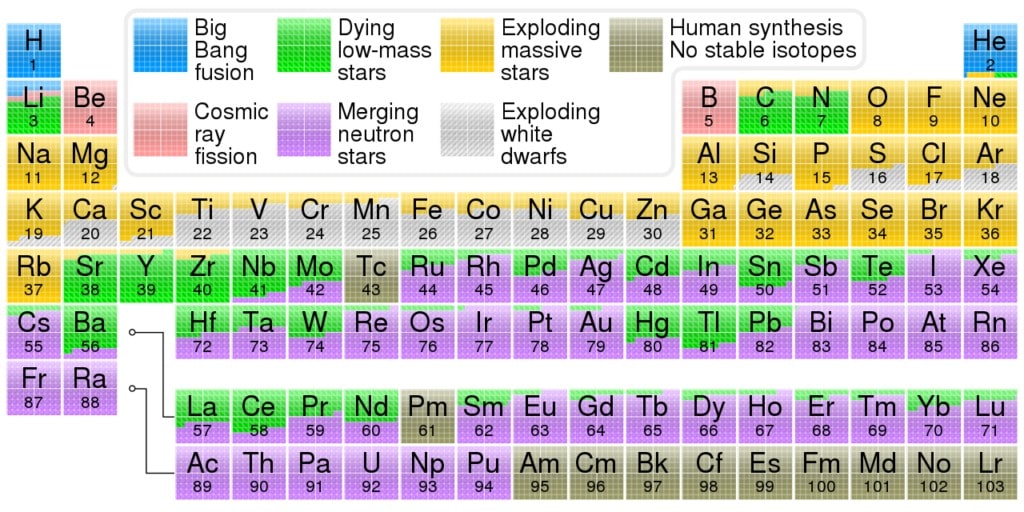

The only chemical elements produced in the big bang were hydrogen and helium along with trace amounts of lithium and beryllium.

It was believed that stars could account for the production of the 88 other naturally occurring elements: the elements we know and love, which form our bodies and are necessary for our existence.

These include carbon, nitrogen, oxygen, sodium, iron, and so on.

But there was a problem. In 1939, it was discovered that there is no stable nucleus with a mass number of 5. This is known as the mass-5 roadblock. Like a staircase missing a step, it prevented heavier elements from being built up by adding one hydrogen nucleus at a time.

Instead, the process would halt at helium-4 (a helium nucleus with two protons and two neutrons). Perhaps two helium nuclei could fuse to make beryllium-8, and thereby “jump” over the missing fifth step.

But this didn’t work either.

In 1932, John Cockcroft and Ernest Walton found that beryllium-8 is unstable. It lasts for less than a thousandth of a trillionth of a second. Their work won them the 1951 Nobel Prize in physics for transmuting the elements — realizing the long-held dream of alchemists.

So yet another step was missing. With no known mechanism to get over the hurdle of the mass-5 and mass-8 roadblocks, there was no explanation for how elements necessary to life came to be.

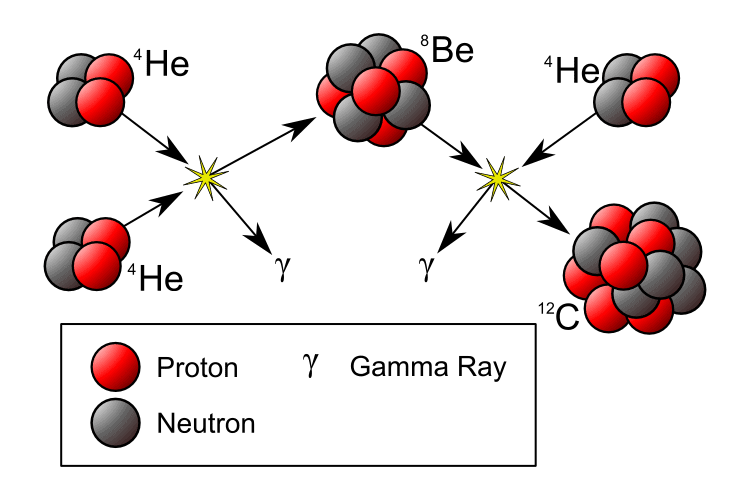

This problem led the cosmologist Fred Hoyle, in 1953, to make what’s described as “the most outrageous prediction” ever made in science.

Hoyle’s outrageous prediction was the existence of a yet undiscovered excited energy state of the carbon-12 nucleus, which had somehow been missed by all the particle physicists in the world.

If this state existed, it would allow the triple-alpha process — the simultaneous collision of three helium-4 nuclei to yield carbon-12.

If carbon could be made this way, the mass-5 and mass-8 roadblocks could be cleared, and then other heavier elements could be built one hydrogen or helium nucleus at a time. Without this state, carbon would be many millions of times rarer, and we wouldn’t be here.

So, Hoyle reasoned, this state of carbon must exist.

In 1953, Hoyle traveled from Cambridge, England to visit William Fowler’s nuclear physics lab at Caltech. Hoyle asked that Fowler’s lab do the experiments to check for this state of the carbon-12 nucleus, which he predicted should be at an energy level of 7.68 million eV.

I was very skeptical that this steady state cosmologist, this theorist, should ask questions about the carbon-12 nucleus. […] Hoyle just insisted — remember, we didn’t know him all that well — here was this funny little man who thought that we should stop all this important work that we were doing otherwise and look for this state, and we kind of gave him the brush off.

William Fowler in interview (1973)

But Hoyle succeeded in convincing a junior physicist at the lab, Ward Whaling, to check for it. Five months later, Hoyle received word.

Whaling confirmed the existence of the excited state of carbon-12, and it was almost exactly where Hoyle predicted: at 7.655 million eV!

Hoyle’s prediction is remarkable because he used astrophysics (the physics of stars) to find unknown properties in nuclear physics (the physics of atoms and their nuclei). Fowler was an instant convert.

So it was really quite a tour de force, that a man who walked into the lab predicted the existence of an excited state of a nucleus, and when the appropriate experiment was performed it was found. And no nuclear theorist starting from basic nuclear theory could do that then, nor can they really do it now. So Hoyle’s prediction was a very striking one.

William Fowler in interview (1973)

Fowler took a year off from his post at Caltech to work with Hoyle in Cambridge. Together with two astronomers, Margaret and Geoffrey Burbidge, they worked out a complete theory of element formation, showing how every element is produced and explaining the relative abundances of the elements as found in nature.

Their work was revolutionary and it made a name for the authors.

For this work, Fowler received the Nobel Prize in physics in 1983. Hoyle, however, did not share in the prize, creating controversy.

In any event, no one denied the significance of their accomplishment.

With their 1957 paper, humanity finally had an understanding of where all the matter, which makes up our world, our food, our shelter, our very bodies, came from — the innermost depths of long-dead stars.

We are literally the ashes of long dead stars. If you’re less romantic, we are the nuclear waste from the fuel that made those stars shine.

Sir Martin Rees in “What We Still Don’t Know: Why Are We Here” (2004)

And so, the world as we know it is owed to the carbon-12 nucleus having this chance property. Like the delicate balance of the density of the universe, the existence of this state hangs in a delicate balance.

As it happens, the energy level of this state is at 7.655 MeV. Had the energy level of this state been less than 7.596 MeV or greater than 7.716 MeV, there would be almost no carbon in the universe.
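To get a feel for how narrow this window is, the short sketch below expresses the limits as percentages of the measured energy. All four numbers come from the text; the helper name `percent_change` is just an illustrative choice.

```python
# Energies in MeV, all taken from the article.
HOYLE_STATE = 7.655        # measured excited state of carbon-12
LOWER_LIMIT = 7.596        # below this, almost no carbon
UPPER_LIMIT = 7.716        # above this, almost no carbon
HOYLE_PREDICTION = 7.68    # Hoyle's 1953 estimate


def percent_change(value: float, reference: float) -> float:
    """Signed difference of value from reference, in percent."""
    return (value - reference) / reference * 100.0


room_below = percent_change(LOWER_LIMIT, HOYLE_STATE)
room_above = percent_change(UPPER_LIMIT, HOYLE_STATE)
prediction_error = percent_change(HOYLE_PREDICTION, HOYLE_STATE)

print(f"room below the state: {room_below:.2f}%")
print(f"room above the state: {room_above:.2f}%")
print(f"error of Hoyle's prediction: {prediction_error:.2f}%")
```

The allowed range works out to less than one percent in either direction, and Hoyle's predicted value sits inside it.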

The minor miracle of the carbon-12 nucleus having this excited state and it being in exactly the right range did not go unnoticed.

Some super-calculating intellect must have designed the properties of the carbon atom, otherwise the chance of my finding such an atom through the blind forces of nature would be utterly minuscule.

Fred Hoyle in “The Universe: Past and Present Reflections” (1982)

[…]

A common sense interpretation of the facts suggests that a superintellect has monkeyed with physics, as well as with chemistry and biology, and that there are no blind forces worth speaking about in nature. The numbers one calculates from the facts seem to me so overwhelming as to put this conclusion almost beyond question.

The balancing of the universe’s density, and the fortune of the carbon-12 excited state were just the first of many “cosmic coincidences.”

The more scientists probed the inner workings of the universe, the more lucky coincidences they found. With each one, evidence gathered to support the idea that the laws of physics are finely tuned to permit the emergence of complexity, and with that complexity, life.

Cosmic Coincidences

The expansion rate of the universe, and the existence of the excited state for the carbon-12 nucleus are due to fundamental physical forces.

For instance, the expansion rate of the universe is governed by the strength of gravity and the energy of the vacuum. Likewise, the excited state of carbon-12 is determined by electromagnetic and nuclear forces.

So far, particle physicists have identified 25 dimensionless constants in the standard model, while cosmologists have identified five others.

A universe where these fundamental constants have different values is just as mathematically and logically consistent as our own. They represent other universes among the set of possible universes.

Accordingly, these constants are considered free parameters.

Of the 30 known fundamental physical constants, very few of them can change to any significant degree without leading to a barren universe: for instance, a universe of only hydrogen, a universe with no aggregations of matter, or a universe of only black holes.

Let’s review the fragility of our universe to tweaks to these constants.

Chemistry and Life

Our universe has a rich chemistry. The 92 naturally occurring chemical elements can combine in a nearly unlimited number of ways.

Atoms assemble into molecules that encode digital information and can even self-arrange into structures that reproduce themselves.

Three minutes after the big bang, the only elements in the universe were hydrogen and helium. The universe remained this way for hundreds of millions of years — a thin haze of light gas.

It’s doubtful that any life could arise in a universe with only these elements. Helium is chemically inert and by itself, hydrogen can only make dihydrogen. Without chemistry, the universe would be lifeless.

Fortunately, the fusion in stars gave us 90 other elements, and with them, new ways to combine, react, and generate complexity.

Of the 92 naturally occurring chemical elements, 60 can be found in your body. Of those, around 30 are believed to play a biological role in humans.

The King of the Elements

Some elements are more important than others when it comes to supporting life. Of those, carbon is the most special. For its unique role in chemistry, carbon’s been called the king of the elements.

Carbon is the only element that can link up 4 other atoms, and also form unlimited chains made of links with itself. Carbon is therefore the glue that binds large and complex molecules together.

Nearly every molecule with more than 5 atoms contains carbon.

This includes essentially all biomolecules: carbohydrates, fats, proteins, RNA, DNA, amino acids, cell walls, hormones, and neurotransmitters.

Without carbon, there could be no life as we know it.

There could be no life based on molecules, since complex molecules are impossible without the glue of carbon to hold them together.

That carbon exists at all is due to the miracle of the Hoyle state, which depends on properties of nuclear and electromagnetic forces.

A Desert Universe

After hydrogen and helium, oxygen is the most abundant element in the universe. By mass, it makes up 89% of the oceans, and 50% of Earth’s crust. It even makes up the majority of your weight.

For every 100 pounds someone weighs, 65 pounds are oxygen.

Oxygen is important to life for its reactivity. It reacts with every element except for fluorine and the noble gases. Without oxygen, there could be no H2O, and the whole universe would be a desert.

We owe our existence not only to the presence of oxygen in the universe, but to the availability of oxygen outside the cores of stars.

All our oxygen came from the cores of massive stars. When these stars exhausted their fuel, their cores collapsed under their own weight.

These cores are roughly the size of our moon, but have the mass of our sun. Accordingly, their gravitational field is 200 billion times stronger than Earth gravity. It’s so strong that infalling matter reaches a quarter of the speed of light by the time it hits the center.

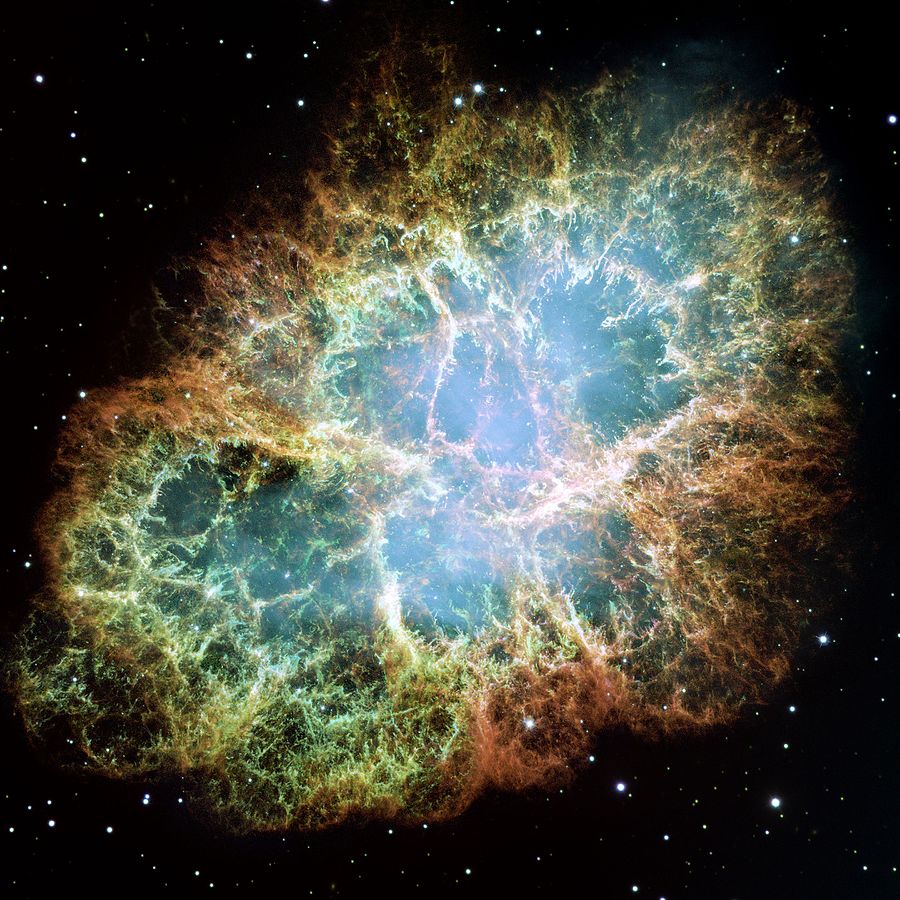

In 10 seconds, 10% of the star’s mass is converted into energy. The result is a type II supernova, also known as a core collapse supernova. The star’s outer layers falling in would create a black hole and lock away all oxygen forever — except this doesn’t happen.

In the last moments of collapse, interactions by the weak nuclear force trigger a bounce that blows away the outer layers of the star.

Had the weak force been stronger, this bounce would happen too early. If it had been weaker, then the bounce would happen too late. In both cases, the result is a disaster. The entire star, along with all of its oxygen would fall into a black hole and never be seen again.

Without oxygen, chemistry is crippled. There would be no water, acids, bases, sugars, carbohydrates, fats, proteins, alcohols, nor any respiration, combustion, or photosynthesis as we know them.

Our lives are indebted to yet another precise balance. This time, it is a balance of the seemingly inconsequential weak nuclear force.

Every breath you take contains atoms forged in the blistering furnaces deep inside stars. Every flower you pick contains atoms blasted into space by stellar explosions that blazed brighter than a billion suns.

Marcus Chown in “The Magic Furnace: The Search for the Origins of Atoms” (2001)

Particle Physics and Life

The chemical properties of elements are set by properties of smaller parts, called particles. These include protons, neutrons, and electrons.

The nucleus of the atom is made of nucleons (protons and neutrons), while the outer shell of the atom is composed of electrons. Nucleons are themselves made of more fundamental particles called quarks.

Nearly every particle in the standard model of particle physics has to exist, or else we would not be here. Moreover, in most cases, the properties of each particle, such as its mass, had to be just so.

A World of Electrons

Electrons, being so small and light, may seem remote and abstract, but the world we know is primarily the world of electrons.

The light we see is emitted by electrons. Sounds we hear are carried by electrons bouncing off each other. Tastes and smells we experience are caused by chemical reactions driven by electrons. Every time we touch something we feel the repulsion of that thing’s electrons.

Every chemical reaction is activity between electrons. Accordingly, the properties of elements, the compounds they can form, their level of reactivity, all of it, is determined by the properties of electrons.

If the mass or charge of electrons had different values, all of chemistry would change. For example, if electrons were heavier, atoms would be smaller and bonds would require more energy. If electrons were too heavy there would be no chemical bonding at all.

If electrons were much lighter, bonds would be too weak to form stable molecules like proteins and DNA. Visible and infrared light would become ionizing radiation. They would be as harmful as X-rays and UV are to us now. Our own body heat would damage our DNA.

Luckily for us, electrons weigh just enough to yield a stable, but not sterile chemistry.

The laws of science, as we know them at present, contain many fundamental numbers, like the size of the electric charge of the electron and the ratio of the masses of the proton and the electron. […]

Stephen Hawking in “A Brief History of Time” (1988)

The remarkable fact is that the values of these numbers seem to have been very finely adjusted to make possible the development of life. For example, if the electric charge of the electron had been only slightly different, stars either would have been unable to burn hydrogen and helium, or else they would not have exploded.

A Starless Universe

Electrons are very light compared to the protons and neutrons:

- The proton’s mass mp is 1,836.15 times the mass of an electron me

- The neutron’s mass mn is 1,838.68 times the mass of an electron me

In a ton of coal, the electrons contribute little more than half a pound.
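The coal figure can be checked with a quick sketch. It assumes, purely for illustration, that coal is pure carbon-12 and that a ton means a short ton of 2,000 pounds; neither assumption is stated in the text.

```python
# Share of a ton of coal that is electrons.
# Assumptions: coal is pure carbon-12, and a ton is 2,000 pounds.

ELECTRON_MASS_U = 5.48579909e-4   # electron mass in atomic mass units
CARBON12_MASS_U = 12.0            # a carbon-12 atom is 12 u by definition
ELECTRONS_PER_CARBON = 6
POUNDS_PER_TON = 2000.0

electron_fraction = ELECTRONS_PER_CARBON * ELECTRON_MASS_U / CARBON12_MASS_U
electron_pounds = POUNDS_PER_TON * electron_fraction

print(f"electrons in a ton of coal: {electron_pounds:.2f} lb")
```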

We’ve seen how electron weight is of critical importance to chemistry. But so too are masses of other particles. It was important that:

- Protons and neutrons be close in weight: mp ≈ mn

- Yet differ in mass by more than one electron: |mp − mn| > me

- And also that neutrons be heavier than protons: mn > mp

As it happens, all three of these conditions hold true. Had any of them not been met we end up with a universe devoid of life.
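The three conditions can be checked directly against measured values. The sketch below uses rounded CODATA rest energies in MeV; the 1% threshold used for "close in weight" is an arbitrary illustrative choice, not something the text specifies.

```python
# Rest energies in MeV (rounded CODATA values).
ELECTRON = 0.51099895
PROTON = 938.27208816
NEUTRON = 939.56542052

mass_gap = NEUTRON - PROTON

# Condition 1: protons and neutrons are close in weight.
# The 1% threshold is an assumption made for illustration.
close_in_mass = abs(mass_gap) / PROTON < 0.01

# Condition 2: they differ by more than one electron mass.
gap_exceeds_electron = abs(mass_gap) > ELECTRON

# Condition 3: the neutron is the heavier of the two.
neutron_is_heavier = NEUTRON > PROTON

# Energy left over when a free neutron decays into a proton and an electron.
decay_energy = NEUTRON - PROTON - ELECTRON

print(f"proton / electron mass ratio:  {PROTON / ELECTRON:.2f}")
print(f"neutron / electron mass ratio: {NEUTRON / ELECTRON:.2f}")
print(f"neutron - proton gap: {mass_gap:.3f} MeV")
print(f"conditions hold: {close_in_mass}, {gap_exceeds_electron}, {neutron_is_heavier}")
print(f"energy released in neutron decay: {decay_energy:.3f} MeV")
```

The positive leftover energy in the last line is what allows the decay described in the next paragraphs to happen at all.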

A free neutron is a neutron not part of an atomic nucleus. Free neutrons are unstable. They have a mean lifetime of ~15 minutes. Left alone, a free neutron will decay into a proton and electron.

Such decay is possible because a neutron weighs more than a proton and an electron put together. Had protons instead weighed more, neutrons would be stable and protons would be unstable. A free proton would then be able to decay into a neutron and a positron.

But most of the hydrogen in the universe has a nucleus that is nothing but a free proton. If protons were unstable, then hydrogen would be unstable. Little hydrogen would survive to today. There would be no stars as we know them, only neutron stars and black holes.

It was also necessary that neutrons be unstable. Had protons and neutrons weighed the same, or been within one electron’s weight, then both nucleons would be stable. There would have been equal numbers of protons and neutrons in the first minutes following the big bang.

Again the result is disaster.

With equal numbers, each proton could pair with a neutron to form hydrogen-2. Hydrogen-2 rapidly reacts to form helium-4. There would be no more hydrogen of any kind in the universe: no fuel to power stars like our sun, no water, no organic chemistry, no life.

A Universe without Atoms

Photons are particles of light and carriers of the electromagnetic force.

Photons are the reason the sun warms you, like magnetic poles repel, electrons bind to nuclei to make atoms, and your eyes can see.

Of all known particles, only two are massless. One is the gluon. The other is the photon. It was necessary for life in the universe that photons be massless. Had they not been, there would be no atoms.

Virtual photons carry the electrostatic and magnetic forces. Because photons are massless, virtual photons can act over any distance.

If on the other hand, photons had mass, then virtual photons could only act over short ranges, on the order of the size of a nucleus.

There would then be no attraction or repulsion by electrons and nothing to bind them to atoms. There would be no chemistry, only plasma.

Ghosts to the Rescue

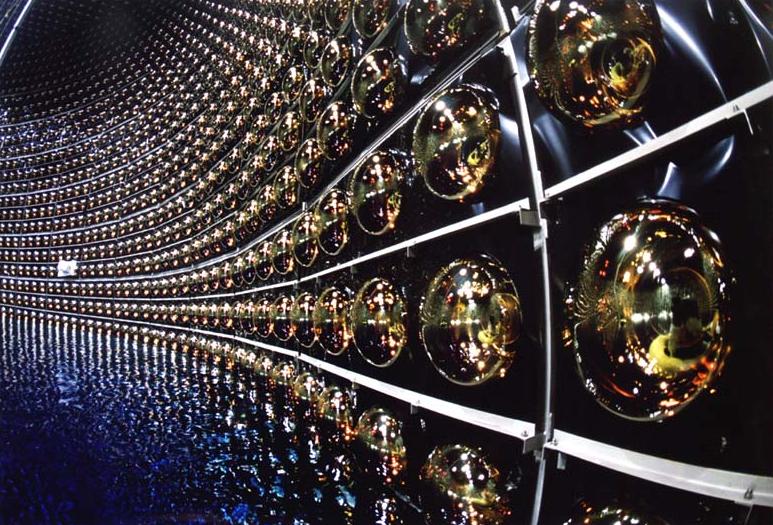

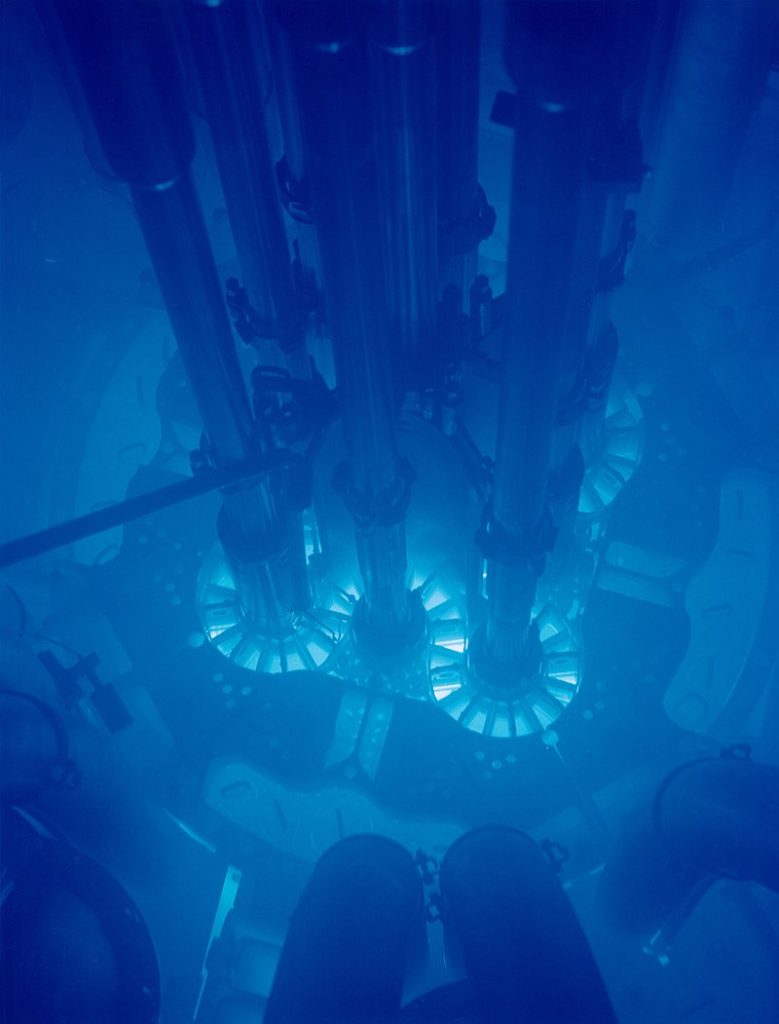

Buried a kilometer under a mountain in Japan, thirteen thousand electronic eyes sit vigil over a tank of ultra pure water.

Each eye is 20 inches wide and sensitive enough to detect single photons. They wait, in total darkness, shielded from radiation by the mountain, submerged in 20 Olympic-sized swimming pools of water.

The eyes wait patiently for a flash of light to appear in the water. If they’re lucky this happens maybe once every 100 minutes. But what could cause a flash of light out of total darkness? — ghost particles.

The flashes are due to a tiny particle known as the neutrino. Neutrinos are so small and light, it takes half a million of them to equal the weight of one electron. Further, they have no electric charge and so can pass straight through normal matter, even whole mountains.

A wall of lead one light-year thick would only block half of them.

Neutrinos are really pretty strange particles when you get down to it. They’re almost nothing at all, because they have almost no mass and no electric charge. They’re just little wisps of almost nothing.

John Conway in PBS interview (2011)

The infrequency of neutrino detection at the Kamioka Observatory is not because neutrinos are rare. Neutrinos are everywhere. Each second, 100 billion neutrinos pass through the tip of your thumb.

On Earth, most neutrinos come from the core of the sun. About 2% of the Sun’s energy is radiated away as neutrinos. To neutrinos, the Earth is transparent. Day or night, they pass through us, unnoticed.

This has earned neutrinos the nickname of ghost particles.

This is why neutrinos are so hard to detect. Only once every few hours, is a neutrino stopped by the massive water tank in Kamioka.

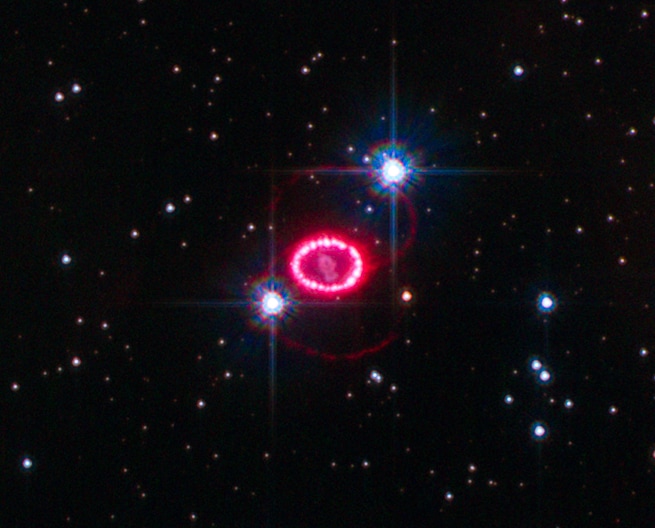

But on February 23rd, 1987, something strange happened, something that had not happened before and has not happened since: in the span of 10 seconds, the Kamioka observatory registered 12 separate flashes.

It was not a problem with the equipment. On the other side of the world, the IMB neutrino detector in Ohio saw a flurry of flashes during those same 10 seconds.

Something, somewhere, released an incredible burst of neutrinos.

As it turned out, the source of this neutrino burst was something far away and long ago — the death of a star beyond our galaxy.

These neutrino labs were the first to detect the explosion. Astronomers wouldn’t notice the event until several hours later. Today, a network of neutrino labs forms our supernova early warning system.

In 2002, Masatoshi Koshiba, who directed the neutrino experiments, and Raymond Davis Jr., who built the first neutrino detector, shared the Nobel Prize in Physics for the detection of these neutrinos.

Given their ghostly nature, it would be easy to write off neutrinos as playing no important role in the universe, and having no relevance to life. But we owe our existence to the tiny neutrino.

It is the neutrino that rescues oxygen and other vital elements from disappearing into the collapsing core of a dying star. During a core collapse, 100 times more energy is released in 10 seconds than our sun will emit in its 10-billion-year life.

Around 99% of this energy is released as neutrinos.
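To put rough numbers on these claims, the sketch below converts them into joules. It assumes the Sun shines at its present luminosity (about 3.828e26 watts, a value not given in the text) for its whole 10-billion-year life; the factor of 100, the 10 seconds, and the 99% share are taken from the text.

```python
# Order-of-magnitude energy budget of a core collapse.
# Assumption: the Sun keeps its present luminosity for its entire life.

SOLAR_LUMINOSITY_W = 3.828e26     # assumed present-day solar output, in watts
SECONDS_PER_YEAR = 3.156e7
SUN_LIFETIME_YEARS = 10e9         # from the text

COLLAPSE_TO_SUN_RATIO = 100.0     # from the text
COLLAPSE_DURATION_S = 10.0        # from the text
NEUTRINO_SHARE = 0.99             # from the text

sun_lifetime_energy = SOLAR_LUMINOSITY_W * SECONDS_PER_YEAR * SUN_LIFETIME_YEARS
collapse_energy = COLLAPSE_TO_SUN_RATIO * sun_lifetime_energy
neutrino_energy = NEUTRINO_SHARE * collapse_energy
collapse_power = collapse_energy / COLLAPSE_DURATION_S

print(f"Sun over its lifetime: {sun_lifetime_energy:.1e} J")
print(f"core collapse:         {collapse_energy:.1e} J")
print(f"carried by neutrinos:  {neutrino_energy:.1e} J")
print(f"average power:         {collapse_power:.1e} W")
```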

Only a ghost particle could escape from the core and reach the outer layers of the collapsing star. There they deposit a little of their energy, giving the outer layers enough of a push to blow the star apart and save elements like oxygen from otherwise certain doom.

There would be no oxygen, no water, and likely, no life, if it weren’t for the neutrino and the role it plays during the deaths of these giants.

A very interesting question to me is: is the universe more complicated than it needs to be to have us here? In other words, is there anything in the universe which is just here to amuse physicists?

Max Tegmark in “What We Still Don’t Know: Why Are We Here” (2004)

It’s happened again and again that there was something which seemed like it was just a frivolity like that, where later we’ve realized that in fact, “No, if it weren’t for that little thing we wouldn’t be here.” I’m not convinced actually that we have anything in this universe which is completely unnecessary to life.

Fundamental Forces and Life

There are just four fundamental forces in nature:

- Electromagnetism

- Gravity

- The Weak Nuclear Force

- The Strong Nuclear Force

These forces drive all movement and every physical interaction. In each of them, scientists have noticed an inexplicable balancing act.

Electromagnetism and the “Greatest Damn Mystery in Physics”

The strength of the electromagnetic force is determined by a dimensionless constant called the fine-structure constant (\alpha).

\alpha = 0.00729735 \approx \frac{1}{137}

Due to \alpha having a value of about \frac{1}{137}, the speed of an electron in a hydrogen atom is \frac{1}{137} the speed of light. It also sets the fraction of electrons that emit light when they strike phosphorescent screens at \frac{1}{137}.

Determining such fundamental properties as the speed of electrons in atoms means \alpha determines how large atoms are, which in turn determines what molecules are even possible.

A different \alpha would change properties like the melting point of water and the stability of atomic nuclei.

Physicists calculated that had \alpha differed from its current value by just 4%, the carbon-12 excited energy level would not be in the right place. There would be almost no carbon in the universe if \alpha were \frac{1}{131} or \frac{1}{144}.
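The two endpoints can be turned into percentage shifts with a few lines of arithmetic. The sketch below uses 1/137.036 for \alpha and also evaluates the electron speed in hydrogen as \alpha times the speed of light; the helper name `percent_shift` is an illustrative choice.

```python
# How far 1/131 and 1/144 are from the actual fine-structure constant.

ALPHA = 1.0 / 137.036             # fine-structure constant (rounded)
SPEED_OF_LIGHT = 299_792_458.0    # metres per second (exact)


def percent_shift(new_alpha: float) -> float:
    """Signed change from ALPHA to new_alpha, in percent."""
    return (new_alpha / ALPHA - 1.0) * 100.0


shift_up = percent_shift(1.0 / 131.0)
shift_down = percent_shift(1.0 / 144.0)
electron_speed = ALPHA * SPEED_OF_LIGHT

print(f"alpha = 1/131 is a shift of {shift_up:+.1f}%")
print(f"alpha = 1/144 is a shift of {shift_down:+.1f}%")
print(f"electron speed in hydrogen: {electron_speed:.3e} m/s")
```

Both endpoints sit between four and five percent away from the measured value.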

It has been a mystery ever since it was discovered more than fifty years ago, and all good theoretical physicists put this number up on their wall and worry about it. Immediately you would like to know where this number for a coupling comes from: is it related to pi or perhaps to the base of natural logarithms?

Richard Feynman in “QED: The Strange Theory of Light and Matter” (1985)

Nobody knows. It’s one of the greatest damn mysteries of physics: a magic number that comes to us with no understanding by man. You might say the “hand of God” wrote that number, and “we don’t know how He pushed his pencil.” We know what kind of a dance to do experimentally to measure this number very accurately, but we don’t know what kind of dance to do on the computer to make this number come out, without putting it in secretly!

Gravity and the Lives and Deaths of Stars

The strength of gravity is determined by a dimensionless constant called the gravitational coupling constant (\alpha_{G}).

\alpha_{G} = 5.907 \times 10^{-39} = 0.000000000000000000000000000000000000005907

It is striking how small \alpha_{G} is. The smallness of \alpha_{G} means gravity is exceptionally feeble compared to the other forces, so much weaker that it’s an unsolved mystery of physics (called the hierarchy problem).

If \alpha_{G} were larger, you and everything else in the universe would weigh more. Conversely, if \alpha_{G} were smaller, everything would weigh less.

But if gravity weren’t so weak, we wouldn’t be here.

In 1947, the physicist Pascual Jordan noticed a strange coincidence. He described it in his book “The Origin of the Stars.”

The coincidence he noticed was that the mass of the sun is suspiciously close to the weight of a proton divided by {\alpha_{G}}^{3/2}. In fact, the mass of nearly every star is within a factor of ten from this number.
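Jordan's coincidence is easy to reproduce. The sketch below uses the value of \alpha_{G} given above together with rounded reference values for the proton mass and the solar mass, which are not stated in the text.

```python
# Jordan's coincidence: proton mass divided by alpha_G^(3/2), versus the Sun.

ALPHA_G = 5.907e-39           # gravitational coupling constant (from the text)
PROTON_MASS_KG = 1.67262e-27  # rounded reference value
SOLAR_MASS_KG = 1.98847e30    # rounded reference value

stellar_mass_scale = PROTON_MASS_KG / ALPHA_G ** 1.5
ratio_to_sun = stellar_mass_scale / SOLAR_MASS_KG

print(f"proton mass / alpha_G^(3/2): {stellar_mass_scale:.2e} kg")
print(f"in solar masses:             {ratio_to_sun:.2f}")
```

The result comes out within a factor of two of the Sun's mass.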

There is a reason for this.

The electrostatic repulsion between two protons is about 10^{36} times stronger than their gravitational attraction. Accordingly, once you have around 10^{36 \times (3/2)} or 10^{54} protons in one place (as in a star), the gravitational attraction between the star and a proton begins to eclipse the strength of two protons’ repulsion, sparking fusion.

But should the mass of a compact object increase much past this level, the star becomes unstable and will either blow itself apart or collapse into a neutron star or black hole at the Chandrasekhar limit.

Stars are so big because gravity is so weak.

If gravity were stronger, everything would miniaturize. Planets, mountains, animals, even the observable universe, would need to shrink to avoid collapsing under their own weight. (See: “Why are things the size that they are?”)

A stronger gravity not only decreases a star’s size, but also its life expectancy. A smaller star leaks heat more quickly. If gravity were ten times stronger, an equivalently hot star would live just one tenth the time. So if \alpha_{G} had 37 zeros, rather than 38, after its decimal point, a star like our sun would live not ten billion years, but one billion.

Image Credit: NASA/JPL/Texas A&M/Cornell

Short-lived stars doom complex life. It took billions of years for multicellular life to appear on Earth. The chance for life to appear at all only diminishes as \alpha_{G} increases.

The cosmologist Martin Rees described the astrophysics of a hypothetical universe where \alpha_{G} is a million times stronger than it is:

Heat would leak more quickly from these ‘mini-stars’: in this hypothetical strong-gravity world, stellar lifetimes would be a million times shorter. Instead of living for ten billion years, a typical star would live for about 10,000 years. A mini-Sun would burn faster, and would have exhausted its energy before even the first steps in organic evolution had got underway.

Sir Martin Rees in “Just Six Numbers” (1999)
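The two lifetime figures above (one billion years for gravity ten times stronger, about 10,000 years for gravity a million times stronger) both follow from the same simple rule of thumb: lifetime scales inversely with \alpha_{G}. The sketch below only encodes that rule as stated in the text. The function name is hypothetical, and real stellar models are far more involved.

```python
SUN_LIFETIME_YEARS = 10e9  # "ten billion years", from the text


def scaled_lifetime_years(gravity_factor: float) -> float:
    """Lifetime of a sun-like star if alpha_G were multiplied by gravity_factor.

    Assumes the inverse scaling used in the text (lifetime ~ 1 / alpha_G).
    """
    if gravity_factor <= 0:
        raise ValueError("gravity_factor must be positive")
    return SUN_LIFETIME_YEARS / gravity_factor


for factor in (1, 10, 1e6):
    years = scaled_lifetime_years(factor)
    print(f"{factor:g}x gravity -> {years:,.0f} years")
```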

Life owes its existence to weak gravity. But we should also be grateful that \alpha_{G} isn’t zero or negative. Either would lead to disaster of another kind.

With zero or negative gravity, no galaxies, stars, or planets form. There would be no place for life to begin for there would be no places at all.

The Weak Nuclear Force and the Biggest Explosions

The weak nuclear force causes particle decay. The decay rate is set by a dimensionless constant called the weak force coupling constant (\alpha_w).

\alpha_w \approx 0.000001 = 10^{-6}

Unstable particles, such as neutrons, muons, and pions, spontaneously convert (or decay) into other particles. For example, in beta decay a neutron decays into a proton, electron and neutrino.

A larger \alpha_w shortens the lives of unstable particles and accelerates radioactive decay. But altering \alpha_w has other consequences.

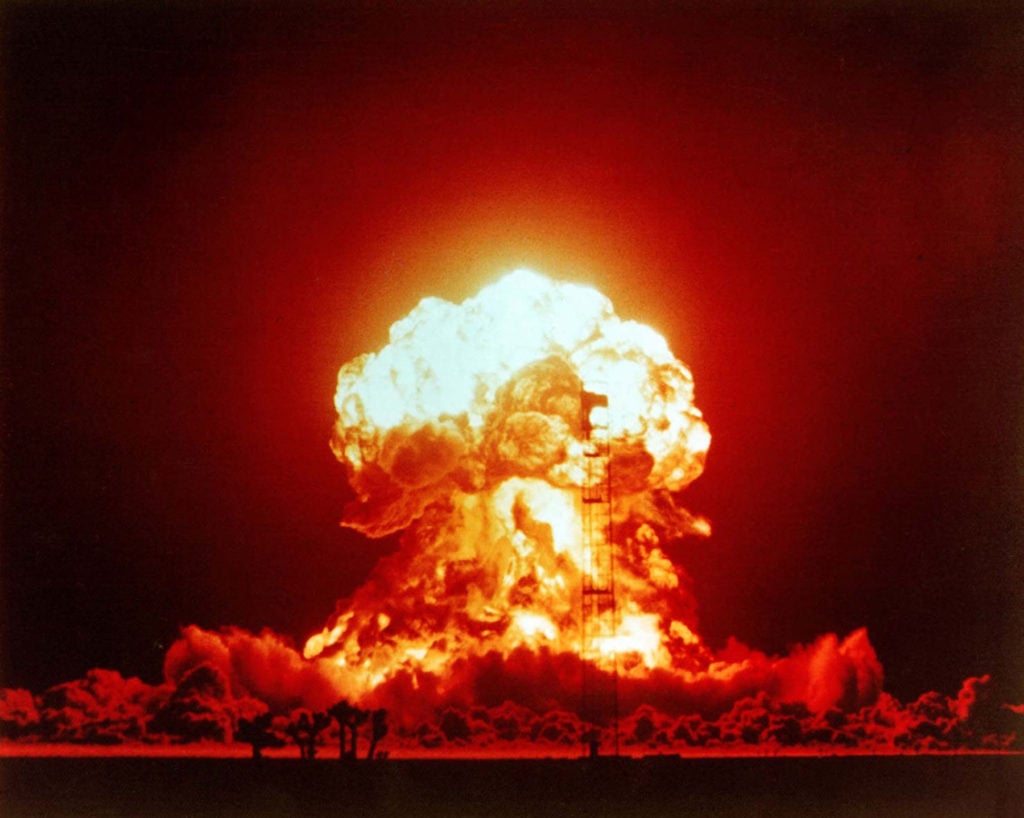

The value of \alpha_w causes the biggest explosions in the universe.

The biggest man-made explosion was the 50-megaton Tsar Bomba. It had a yield of 50 million tons of TNT. Its mushroom cloud rose to 42 miles, reaching the edge of space at more than seven times the height of Everest.

Yet this explosion is pitiful next to the biggest explosions known.

The biggest explosions in the universe are core-collapse supernovae. They release the energy of 10^{28} Tsar bombs. If each sand grain equaled a billion Tsar bombs, and if every grain of sand on Earth’s beaches exploded at once, that would approach the power of a core-collapse supernova.
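The comparison can be checked with a few lines of arithmetic. This sketch assumes the standard conversion of 4.184 × 10^9 joules per ton of TNT (not given in the text) and uses the 10^{46} joule supernova figure quoted later in this article.

```python
TNT_TON_JOULES = 4.184e9   # assumed: standard energy of one ton of TNT
TSAR_BOMBA_TONS = 50e6     # 50 megatons, from the text
SUPERNOVA_JOULES = 1e46    # order-of-magnitude figure quoted in this article

# Energy released by one Tsar Bomba, in joules.
tsar_bomba_joules = TSAR_BOMBA_TONS * TNT_TON_JOULES

# Number of Tsar Bombas needed to match one core-collapse supernova.
tsar_bombas_per_supernova = SUPERNOVA_JOULES / tsar_bomba_joules

print(f"Tsar Bomba: {tsar_bomba_joules:.2e} J")
print(f"Tsar Bombas per supernova: {tsar_bombas_per_supernova:.1e}")
```

With these inputs the ratio lands at a few times 10^{28}, in line with the figure above.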

The neutrino luminosity of a core-collapse supernova briefly exceeds the light output of all the stars of [the observable] universe.

Craig Hogan in “Why the Universe is Just So” (1999)

As we’ve seen, these most-powerful explosions depend on the most ghostly of particles — the neutrino. But the explosions also depend on the neutrino having the right amount of ghostliness.

Ever so rarely, a neutrino interacts with a particle through the weak nuclear force. For instance, a neutrino might interact with an electron and give it enough energy to knock it away from its atom.

This electron, if travelling fast enough, creates a shock-wave of light — the optical equivalent of a sonic boom — known as the Cherenkov effect. The effect is named for Pavel Cherenkov, who first noticed it in 1934, earning him a share of the 1958 Nobel Prize in Physics.

This effect is responsible for the flashes of light neutrino detectors look for, and also the blue glow seen inside nuclear reactors.

Since neutrinos feel the weak nuclear force, the value of \alpha_w determines the ease with which neutrinos interact with regular matter. In other words, it sets the neutrino’s level of “ghostliness.”

Recent models show that had \alpha_w been less than half its current value, neutrinos would leave the collapsing core too quickly to forestall the collapse. Conversely, had \alpha_w been more than five times its current value, then neutrinos would be trapped in the core for too long. Again, they would be unable to prevent the collapse of the star.

Core-collapse supernovae are the source of all free oxygen in the universe. Every breath you take and every drop of water you sip contains oxygen from these stars, blown into space by neutrinos.

If \alpha_w had been a little bigger or smaller, elements necessary for life would stay forever trapped in the remnants of giant stars. Accordingly, life as we know it depends on \alpha_w being close to 0.000001.

The Strong Nuclear Force and Sticky Nucleons

The strong nuclear force is the glue that holds atomic nuclei together. The stickiness of this glue is determined by a dimensionless constant called the strong force coupling constant (\alpha_s).

\alpha_s \approx 1

Of the four fundamental forces, the strong force is the strongest. It is 137 times stronger than the electromagnetic force, and a million times stronger than the weak nuclear force. Unlike electromagnetism and gravity, the strong force is range-limited. It can only be felt at up to a few femtometers away.

A larger \alpha_{s} makes fusion easier and fission harder. With a smaller \alpha_{s}, the reverse happens. We are lucky \alpha_{s} is much larger than \alpha. If it weren’t, the strong force wouldn’t be able to overcome the electrostatic repulsion of protons, and nuclei of atoms wouldn’t stay together at all.

In such a universe, the only possible atom is hydrogen. Accordingly, if the strong force weren’t so strong, we wouldn’t be here.

There are ~100 chemical elements because the strong force is ~100 times stronger than the electromagnetic force.

But it is a delicate balance. The strong force must not be too strong.

Had \alpha_{s} been 3.7% stronger, fusion would be too easy. All hydrogen would have fused into helium in the first minutes after the big bang. There would be no water, no organic compounds, nor fuel for stars like our sun.

Yet, if \alpha_{s} were 11% weaker, hydrogen-2 wouldn’t be stable. Hydrogen-2 plays a necessary role in the fusion that powers stars like our sun. So with a slightly weaker strong force, again our sun wouldn’t shine.

A more recent analysis has placed even tighter constraints on \alpha_{s}, given the details of how carbon and oxygen are produced in the cores of stars:

Even with a change of 0.4% in the strength of [the nucleon-nucleon] force, carbon-based life appears to be impossible, since all the stars then would produce either almost solely carbon or oxygen, but could not produce both elements.

Luke A. Barnes in “The Fine-Tuning of the Universe for Intelligent Life” (2011)

Why does \alpha_{s} have the value it does? No one can say. All we know is that if it didn’t, there would be no one here to speculate about it.

Cosmology and Life

We’ve seen how particle physics, operating at the smallest scales of reality, is littered with coincidences that make life possible.

But particle physicists are not alone in mystifying themselves with discoveries of this kind. Cosmologists, studying the largest scales of reality, and the origins of the universe, have found that had the initial conditions not been just right, none of us would be here.

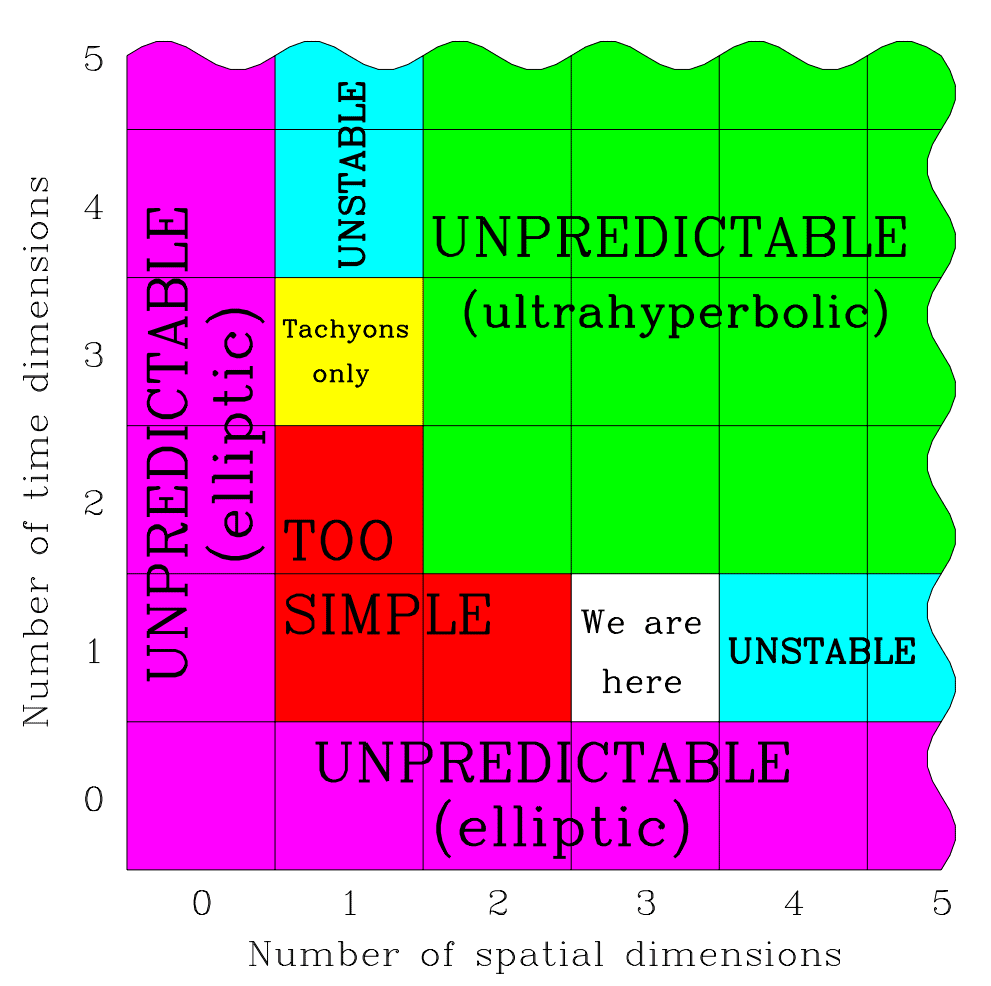

Infinitely Intelligent Babies and Spacetime Dimensionality

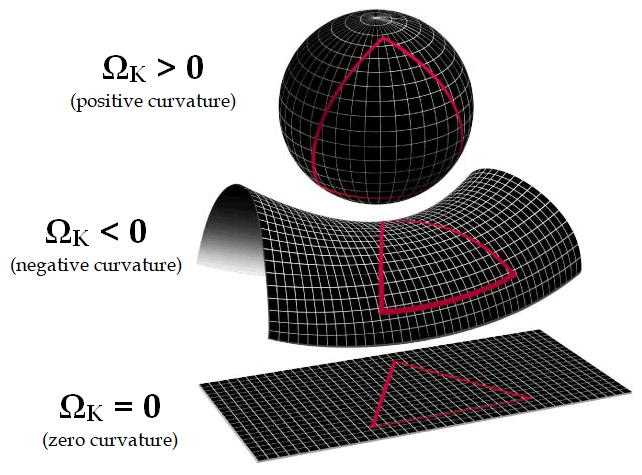

Our universe is marked by having three dimensions of space (height, width, depth) and one dimension of time. Under Einstein’s relativity, space and time merge into a unified whole called spacetime.

Since there are 3 dimensions of space and 1 of time, physicists sometimes refer to this as a 3 + 1 spacetime. (See: “What is time?”)

Even the number and character of dimensions appear significant to life. In a 2-dimensional space like Flatland, topological constraints make certain vital structures difficult or impossible to form.

Difficulties of another kind appear when the number of spatial dimensions increases beyond 3. With 4, rather than 3, dimensions of space, Newton’s inverse square law becomes an inverse cube law.

In space with ≥4 dimensions, orbits are unstable. Planets either crash into their stars or drift away. Electron orbits also become unstable.
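The instability of orbits can be illustrated with a simple Newtonian sketch. It assumes an attractive force of magnitude k / r^{n-1} in n spatial dimensions (the generalization of the inverse square law described above) and checks the curvature of the effective potential at a circular orbit. The function names and the choice k = r_0 = 1 are illustrative assumptions, and this is not the analysis Tegmark himself used.

```python
def effective_potential_curvature(n_dims: int, k: float = 1.0, r0: float = 1.0) -> float:
    """Second derivative of the effective potential at a circular orbit of radius r0.

    Assumes a unit mass feeling an attractive force of magnitude
    k / r**(n_dims - 1), i.e. Newtonian gravity in n_dims spatial dimensions.
    """
    # Angular momentum squared needed for a circular orbit at r0:
    # L^2 / r0^3 = k / r0^(n - 1)  =>  L^2 = k * r0^(4 - n)
    ang_mom_sq = k * r0 ** (4 - n_dims)
    # V_eff''(r0) = 3 L^2 / r0^4 - (n - 1) k / r0^n, which simplifies to (4 - n) k / r0^n
    return 3 * ang_mom_sq / r0 ** 4 - (n_dims - 1) * k / r0 ** n_dims


def circular_orbit_is_stable(n_dims: int) -> bool:
    """A circular orbit survives small nudges only if the curvature is positive."""
    return effective_potential_curvature(n_dims) > 0


for n in (3, 4, 5):
    print(f"{n} spatial dimensions: stable orbit = {circular_orbit_is_stable(n)}")
```

In this toy model the curvature is positive only for three spatial dimensions. At four it vanishes, and beyond that it turns negative, so a slightly disturbed planet either spirals inward or escapes.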

In 1997, the cosmologist Max Tegmark was the first to notice and describe the apparent necessity of 3 + 1 spacetime to life.

With more or less than one time dimension, the partial differential equations of nature would lack the hyperbolicity property that enables observers to make predictions. In a space with more than three dimensions, there can be no traditional atoms and perhaps no stable structures. A space with less than three dimensions allows no gravitational force and may be too simple and barren to contain observers.

Max Tegmark in “On the dimensionality of spacetime” (1997)

This leaves three spatial dimensions and one time dimension as the only viable option. In other words, an infinitely intelligent baby could in principle, before making any observations at all, calculate from first principles that there’s a level II multiverse with different combinations of space and time dimensions, and that 3 + 1 is the only option supporting life. Paraphrasing Descartes, it could then think, Cogito, ergo three space dimensions and one time dimension, before opening its eyes for the first time and verifying its predictions.

Max Tegmark in “Our Mathematical Universe” (2014)

Dark Matter and the Cosmic Web

Cosmologists suspect there exists a particle even more ghostly than the neutrino. While neutrinos can feel both gravity and the weak force, particles of dark matter are thought to only respond to gravity.

There are many theories for what dark matter could be. Examples include axions, sterile neutrinos, and WIMPs. So far, all have eluded direct detection. If dark matter only interacts through gravity, it would be invisible even to such sensitive detectors as the Super-Kamiokande.

For all that is presently known, dark matter particles could be everywhere. Many might be streaming through Earth and our bodies right now. But if they are, we wouldn’t have the slightest clue.

In 1884, Lord Kelvin analyzed the speeds of stars orbiting the galaxy. He found that stars moved so fast that they should fly off — there wasn’t enough gravity from visible matter to keep them in orbit. This led Kelvin to speculate that most matter is in dim or dark stars.

Current models suggest that by mass, dark matter makes up 84.5% of all the matter in the universe — “regular matter” is less common!

Modern detection efforts have mostly ruled out large aggregations of regular matter (such as black holes, rogue planets, and brown dwarfs) as candidates for this missing mass. This finding has led most physicists to look to a yet undiscovered particle (or family of particles) as responsible.

Dark matter may be invisible, but that doesn’t mean it’s unimportant. On the contrary, dark matter appears necessary to our existence.

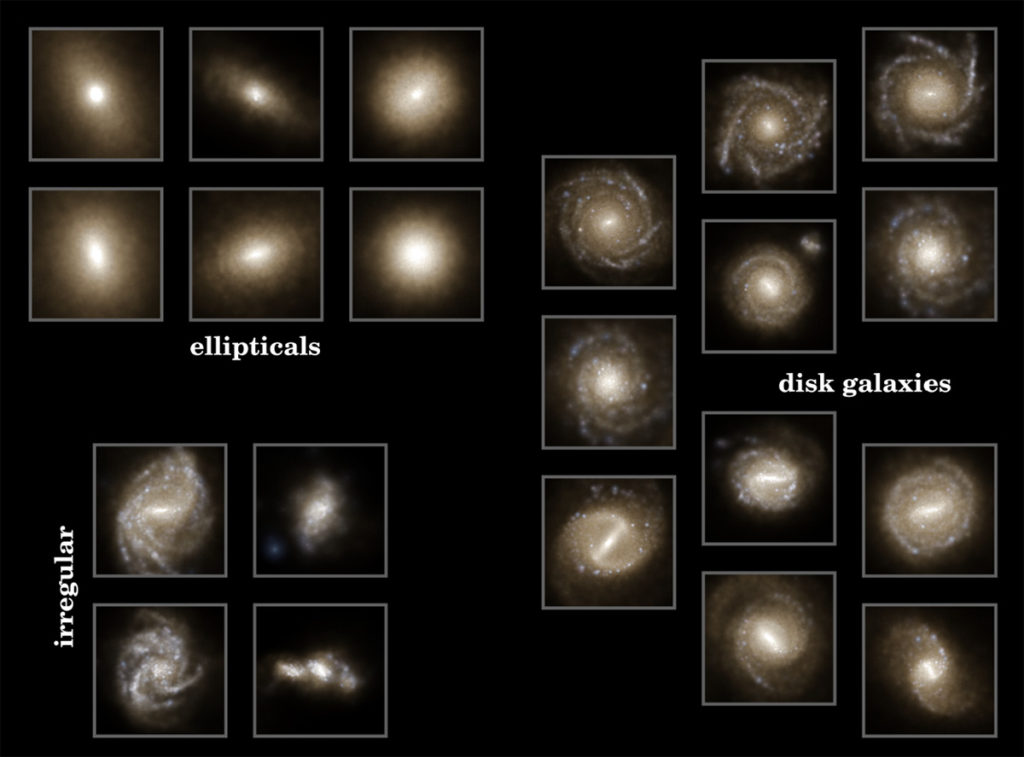

Gravity caused dark matter to coalesce in filaments, or tendrils, forming a great cosmic web. This web is the scaffolding for the structures of the universe. Regular matter, which makes up galaxies and galaxy clusters, clumped along the filaments of this web.

I worked on trying to make universes without dark matter in a computer and they were always a disaster. They just never worked. So a universe without dark matter is just a failed universe. It’s a pretty boring barren place. Galaxies just don’t form, and if galaxies don’t form, stars don’t form, and if stars don’t form, presumably people don’t form.

It was only when we got the right chemistry, you know, the right mix of dark matter and ordinary matter that we suddenly came up with replica universes that for all intents and purposes look just like the real thing.

Carlos Frenk in “What We Still Don’t Know: Why Are We Here” (2004)

Without dark matter, and also the right ratio of dark matter to ordinary matter, our universe would be a “boring barren place.”

Universal Homogeneity and Unwelcome Stars

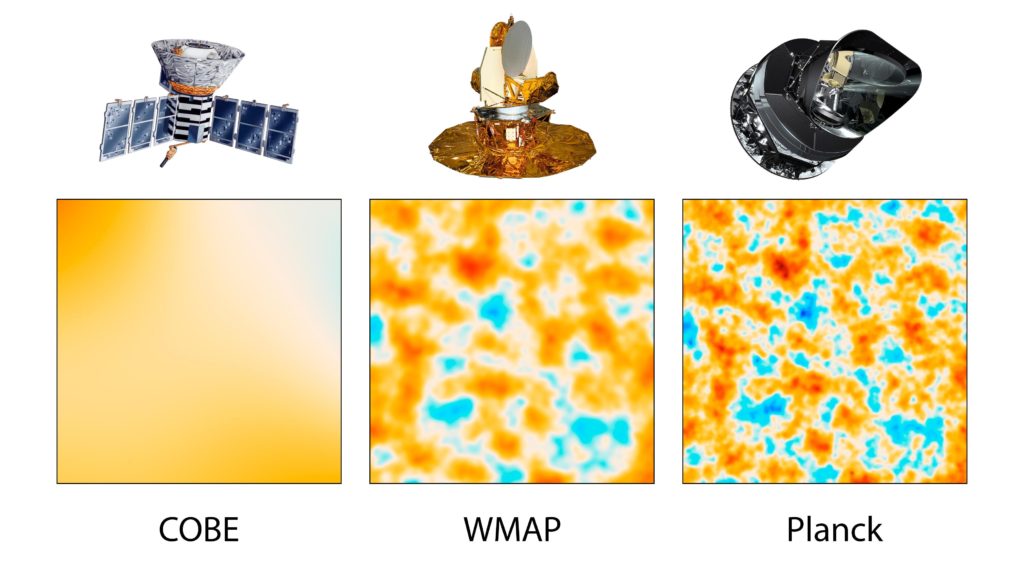

The 1964 detection of microwaves by Arno Penzias and Robert Wilson helped establish the big bang. Terrestrial measurements showed the signal had an equal intensity, coming from every direction in the sky.

But according to cosmological theories, the signal shouldn’t be perfectly uniform. There needed to be some density variations, or else gravity wouldn’t have caused any clumping into galaxies at all.

It took satellite-based measurements to give sufficient precision. The measurements painted a picture of the mottled nature of the CMB.

Project leaders behind these satellite measurements, George Smoot and John Mather, received the 2006 Nobel Prize in Physics. In the words of the prize committee, the measurements “marked the inception of cosmology as a precise science.”

The temperature of space across the night sky is nearly, but not perfectly, uniform. It varies by approximately two parts in 100,000. The size of this variation has been termed the homogeneity constant (Q).

Q \approx 0.00002 = 2 \times 10^{-5}

The variations in temperature are due to density variations in the early universe. Over time, these density differences became magnified through gravity to seed all the large-scale structures we see today: the web of dark matter, galaxy superclusters, and galaxies themselves.

We are very fortunate that Q is two parts in \text{100,000} rather than in \text{10,000} or \text{1,000,000} — in either case, we probably wouldn’t be here.

Q determines the size and densities of galaxies. A reduced Q leads to small and sparsely populated galaxies. Such galaxies wouldn’t have the gravity to keep and recycle the heavy elements bequeathed by previous generations of stars. If Q were much smaller, galaxies wouldn’t form at all.

A larger Q is no better. Had Q been larger, galaxies would be so densely populated that stars would often pass near each other. These unwelcome guests cause havoc for planets. They can permanently alter planetary orbits, or even eject planets from their star system.

This would be a disaster for any life developing on such worlds.

If Q were even bigger, say one part in \text{1,000}, then there would be no stars or galaxies: only monster black holes that quickly swallow all matter in the universe.

Again there would be no chance for life as we know it.

Fortunately, with Q = 2 \times 10^{-5}, our universe is neither too lumpy, nor too smooth. It’s just right. Stars are close enough to reuse elements from previous generations, but not so close they run into each other.

The Cosmological Constant and Einstein’s Greatest Blunder

The strength of the force that drives the expansion of the universe is determined by a number called the cosmological constant (\Lambda).

\Lambda \approx 2.888 \times 10^{-122} = \newline 0.00000000000000000000000000000000000000000 \newline 000000000000000000000000000000000000000000 \newline 000000000000000000000000000000000000002888

\Lambda is incredibly small. It has 121 zeros after the decimal point, and then a two. \Lambda is referred to by many names, such as quintessence, dark energy, vacuum energy, zero-point energy, anti-gravity, and the fifth force.

Regardless of what we call it, all names refer to the same phenomenon: that the universe’s expansion is not slowing but accelerating.

In 1917, in an effort to explain how the universe could be static and eternal (the prevailing belief at the time), without gravitationally collapsing, Einstein introduced \Lambda as a parameter to his equations of general relativity.

The system of equations allows a readily suggested extension which is compatible with the relativity postulate, and is perfectly analogous to the extension of Poisson’s equation. For on the left-hand side of [the equation] we may add the fundamental tensor g_{\mu\nu} multiplied by a universal constant, - \Lambda, at present unknown, without destroying the general covariance. […] This field equation, with \Lambda sufficiently small, is in any case also compatible with the facts of experience derived from the solar system.

Albert Einstein in “Cosmological Considerations in the General Theory of Relativity” (1917)

But observations by Vesto Slipher and later by Edwin Hubble and Milton Humason suggested the universe was not static, but dynamic. In 1922, Alexander Friedmann showed that the equations of general relativity could account for and describe an expanding universe.

In a final blow, Arthur Eddington, who ironically proved Einstein right in 1919 by performing the first test of general relativity, proved Einstein’s static cosmological model wrong in 1930. Eddington showed that a static universe is unstable and therefore could not be eternal.

Einstein was quick to change his mind. He said, “New observations by Hubble and Humason concerning the redshift of light in distant nebulae make the presumptions near that the general structure of the universe is not static.” He added, “The redshift of the distant nebulae have smashed my old construction like a hammer blow.”

According to George Gamow, Einstein remarked that “the introduction of the cosmological term was the biggest blunder he ever made in his life.”

Einstein likely considered it a blunder not for being wrong, but because he missed an opportunity. Had Einstein not tried to prove a static universe and instead looked at what his own equations implied, he might have predicted a dynamic universe before observational results came in. Einstein could have scooped Hubble.

The idea of a cosmological constant was abandoned.

But in 1980, it made a return with the theory of cosmic inflation. Cosmic inflation filled gaps in the big bang. It explained where all the matter and energy came from, why the universe is expanding, and why the density of the universe rests on a knife edge. (See: “What caused the big bang?”)

All inflation needed to get started was for the energy of the vacuum to be non-zero. If vacuum energy is non-zero, space expands on its own, exactly in the way that a cosmological constant predicts.

The repulsive gravity associated with the false vacuum is just what Hubble ordered. It is exactly the kind of force needed to propel the universe into a pattern of motion in which any two particles are moving apart with a velocity proportional to their separation.

Alan Guth in “Eternal inflation and its implications” (2007)

Inflation provided an answer to one fine-tuning question. It answered “Why is the density of the universe so close to the critical density?”

But in doing so, inflation reintroduced \Lambda. And the value of \Lambda highlighted a fine-tuning coincidence so extreme that it’s considered one of the greatest unsolved mysteries in physics.

According to quantum field theory, we expect the inherent energy of the vacuum to be 10^{113} joules per cubic meter. A type II supernova, by comparison, releases just 10^{46} joules.

But when cosmologists measured the vacuum’s energy, they found it to be pitifully weak: one billionth of a joule per cubic meter. In this case, theory and experiment disagreed by a factor of 10^{122}!
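The size of the mismatch follows directly from the two figures above. This short sketch just divides one by the other. The variable names are illustrative.

```python
import math

PREDICTED_J_PER_M3 = 1e113  # quantum field theory estimate, from the text
MEASURED_J_PER_M3 = 1e-9    # one billionth of a joule per cubic meter, from the text

# Ratio of the predicted vacuum energy density to the measured one.
discrepancy = PREDICTED_J_PER_M3 / MEASURED_J_PER_M3

# Express the ratio as a power of ten.
orders_of_magnitude = math.log10(discrepancy)

print(f"theory / measurement is about 10^{orders_of_magnitude:.0f}")
```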

This error is described as, “The worst theoretical prediction in the history of physics.” The question of why this prediction was so bad is called the cosmological constant problem, or the vacuum catastrophe. It remains one of the great unsolved mysteries of physics.

But there is at least one reason why vacuum energy is so low. You probably guessed: had it not been as small as it is, life could not exist.

In 1987, before \Lambda was measured, Steven Weinberg predicted that \Lambda must be nonzero, positive, and smaller than 10^{-120}. Weinberg reasoned that had \Lambda been negative, the universe would have gravitationally collapsed billions of years ago. Had instead \Lambda been slightly larger than it is, say around 10^{-119}, then the universe would expand too quickly for galaxies, stars, or planets to form.

In 1998, two teams of astronomers studying distant supernovae confirmed Weinberg’s prediction. They found that the expansion rate of the universe was not slowing down, but accelerating.

The observed rate of accelerated expansion places \Lambda at 2.888 \times 10^{-122}. This was exactly in the range Steven Weinberg had predicted, 11 years earlier. For their discovery, Saul Perlmutter, Adam Riess and Brian Schmidt received the 2011 Nobel Prize in Physics.

Eighty years after introducing it, Einstein’s cosmological constant was vindicated. The only difference is \Lambda is not at a value that keeps a static universe, but instead is slightly larger, and so it drives an expansion.

But the probability of \Lambda having the value it does is so low that it was inconceivable to physicists. There appears to be no reason it should be so small, aside from the fact that a minuscule \Lambda is necessary for there to be any complex structures or life in this universe.

The fine tunings, how fine-tuned are they? Most of them are 1% sort of things. In other words, if things are 1% different, everything gets bad. And the physicist could say maybe those are just luck. On the other hand, this cosmological constant is tuned to one part in 10^{120} — a hundred and twenty decimal places. Nobody thinks that’s accidental. That is not a reasonable idea — that something is tuned to 120 decimal places just by accident. That’s the most extreme example of fine-tuning.

Leonard Susskind in “What We Still Don’t Know: Are We Real?” (2004)

How Lucky were We?

We’ve seen the near misses, good fortunes, and happy accidents that conspired to make life possible in this universe.

Life requires complex structures. Complex structures require large aggregations of matter and many ways to organize it. This requires a rich chemistry, and in this universe, a rich chemistry requires stars.

Stars need the right kind of particles with the right masses, a balance of the forces and precisely set initial conditions for the universe.

What are the odds everything would work out just right? How likely would it have been to get a life sustaining universe if the fundamental constants of nature were chosen at random?

The Right Particles

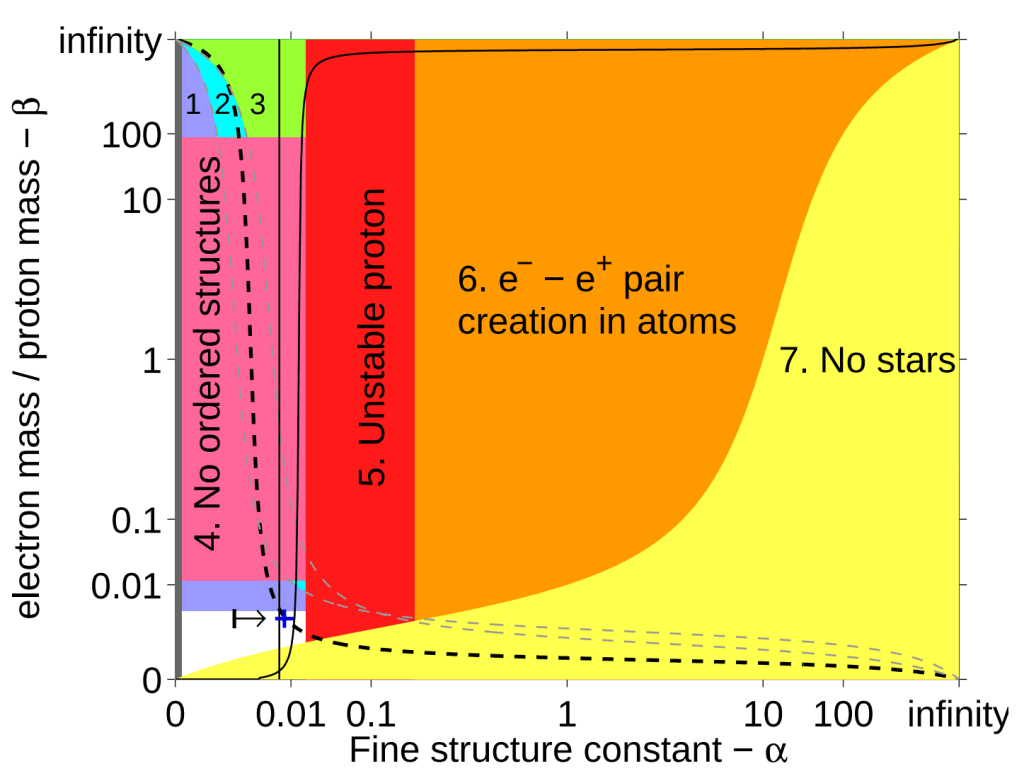

Without the right particles, of the right masses, we don’t have a chemistry. Chemical properties depend on three parameters:

- The proton mass: m_{p}

- The electron:proton mass ratio: \beta = m_{e}/m_{p}

- The fine-structure constant: \alpha

| Constant | Danger Zone | Consequences |
|---|---|---|
| Electron mass (m_{e}) | < 0.5 m_{e}; > 2.5 m_{e} | Stars too dim; No hydrogen |
| Proton mass (m_{p}) | < 0.999 m_{p}; > 1.002 m_{p} | Only hydrogen; Only neutrons |
| Photon mass (m_{\gamma}) | > 0 | No atoms |
| Neutrinos (\nu) | non-existent | No free oxygen |

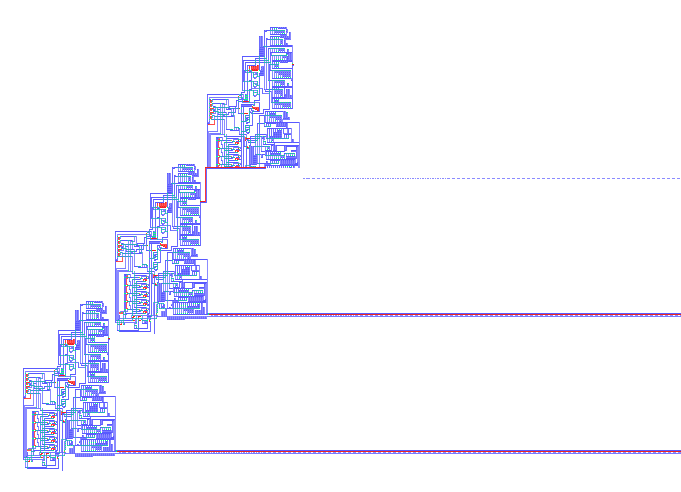

The following graph depicts our island of habitability within the possibility space of two parameters, \beta and \alpha.

\alpha must fall between the two vertical lines. The dashed line shows universes where stars are hot enough to emit light with enough energy to trigger chemical reactions (e.g. photosynthesis). Image Credit: Luke A. Barnes / Max Tegmark

Across the range of every possibility, our universe occupies a position on the dashed line and in the unshaded region (marked by +).

Virtually no physical parameters can be changed by large amounts without causing radical qualitative changes to the physical world.

Max Tegmark in “Is ‘the theory of everything’ merely the ultimate ensemble theory?” (1998)

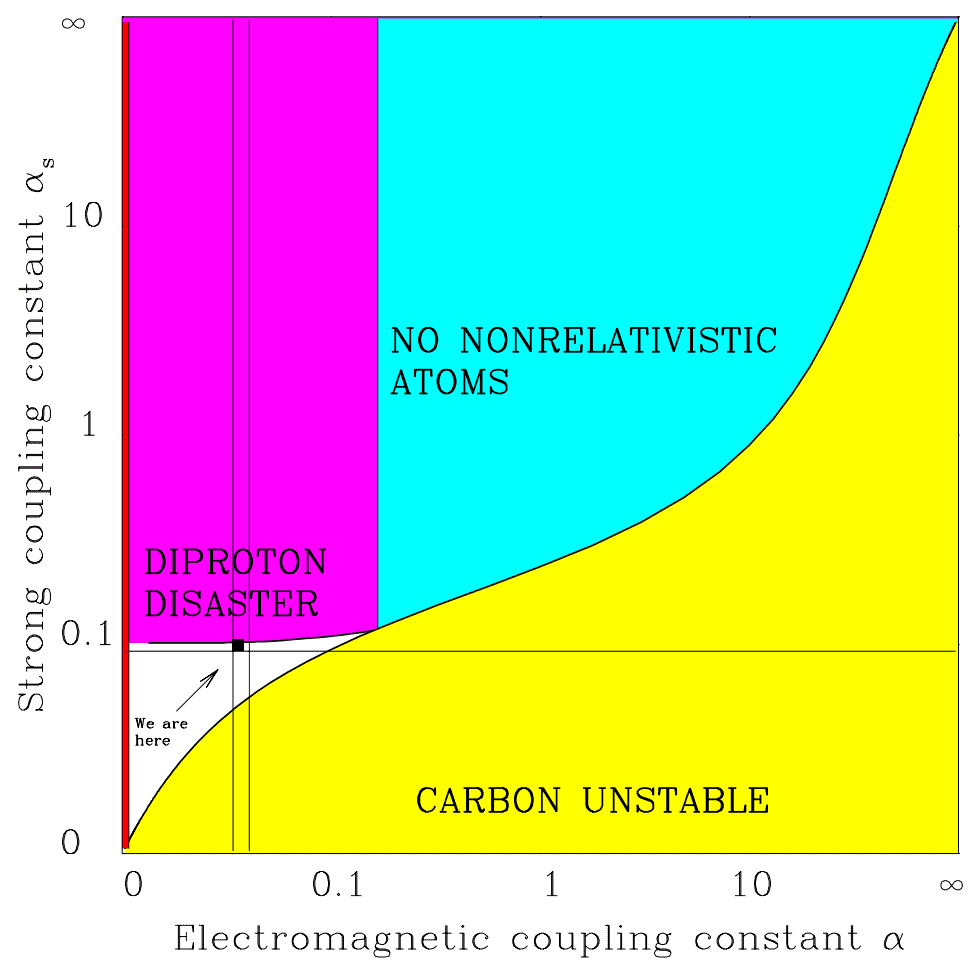

Balanced Forces

To get the right physics, with the right structures at the smallest scales of atomic nuclei and the largest scales of stars and galaxies, the four forces had to be finely balanced.

| Constant | Danger Zone | Consequences |
|---|---|---|
| Electromagnetism (\alpha) | < 0.96 \alpha; > 1.04 \alpha | Little carbon (No Hoyle state) |
| Gravity (\alpha_{G}) | \leq 0; > 10^{-36} | No structures; No long-lived stars |
| Weak Force (\alpha_{w}) | < 0.5 \alpha_{w}; > 5 \alpha_{w} | No free oxygen (No rebound) |
| Strong Force (\alpha_{s}) | < 0.9 \alpha_{s}; > 1.037 \alpha_{s} | Stars don’t shine; No hydrogen |
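The danger zones in this table can be read as windows around each constant's observed value. The sketch below encodes three of the rows as multiples of the observed value and tests whether a rescaled constant stays inside its window. The dictionary and function names are hypothetical, and the gravity row is left out because its bounds are absolute values rather than multiples.

```python
# Life-permitting windows as multiples of each constant's observed value.
# Bounds are copied from the table above; the names are illustrative only.
WINDOWS = {
    "electromagnetism": (0.96, 1.04),
    "weak force": (0.5, 5.0),
    "strong force": (0.9, 1.037),
}


def is_life_permitting(constant: str, scale: float) -> bool:
    """Return True if the constant, rescaled by `scale`, avoids both danger zones."""
    low, high = WINDOWS[constant]
    return low <= scale <= high


# Observed values (scale 1.0) pass; a 4% stronger strong force does not.
print(is_life_permitting("strong force", 1.0))
print(is_life_permitting("strong force", 1.04))
print(is_life_permitting("weak force", 0.4))
```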

The following graph depicts our island of habitability within the possibility space of two parameters, \alpha_{s} and \alpha.

\alpha must fall between the two vertical lines. If \alpha_{s} were slightly stronger, we run into the diproton disaster: nuclei of two protons become stable and there would be no hydrogen. Moving to the right, repulsion between protons becomes too strong. As a result, carbon and all heavier elements become unstable. Moving below the horizontal line prevents deuterium from forming, which has a key role in stellar fusion. Stars like our sun would not shine. Image Credit: Max Tegmark

Across the range of possibilities, our universe sits squished between all these bounds, occupying a spot marked by the black square.

If any one of the numbers were different even to the tiniest degree, there would be no stars, no complex elements, no life.

Martin Rees

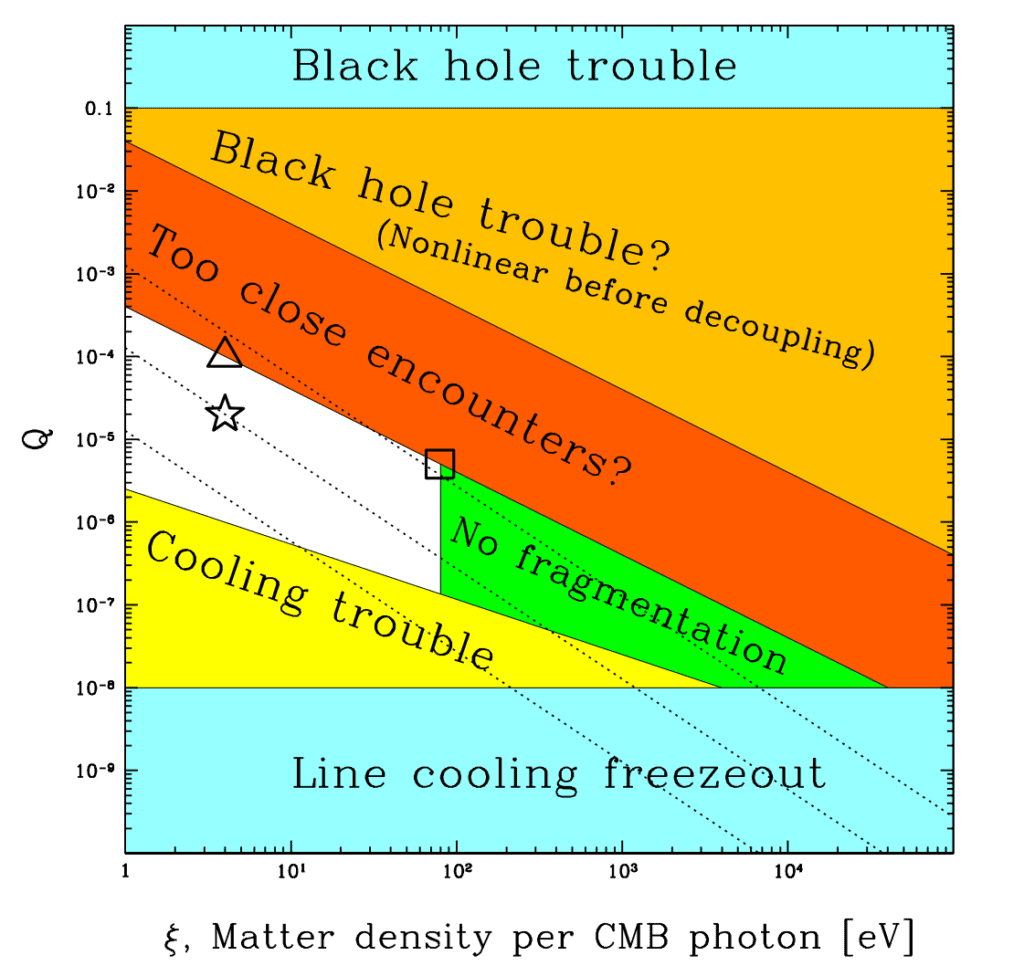

Precise Initial Conditions

A few cosmological parameters have no impact on biology or chemistry. We can therefore consider them tuned independently of other constants of nature like particle masses and force strengths.

These independent cosmological parameters are:

- The density of dark matter: \zeta_{c}

- The homogeneity constant: Q

- The cosmological constant: \Lambda

These parameters drive the formation of galaxies, stars, and planets.

| Constant | Danger Zone | Consequences |
|---|---|---|
| Spatial dimensionality (S) | < 3; > 3 | Too simple; No stable orbits |
| Dark matter density (\zeta_{c}) | < 0.5 \zeta_{c}; > 20 \zeta_{c} | No galaxies; No stars |
| Homogeneity constant (Q) | < 10^{-6}; > 10^{-4} | No structures; Stellar intruders |
| Cosmological constant (\Lambda) | < -10^{-121}; > 10^{-120} | Universe collapses; No structures |

The following graph depicts our island of habitability within the possibility space of two parameters, Q and total matter density \zeta.

If Q were slightly greater, galaxies would be too dense and dangerous to life-bearing planets. If Q were much less, galaxies wouldn't be able to condense out of intergalactic gas. Moving to the right, if the dark matter density is too high, stars can't fragment out of the gas within galaxies. Image Credit: Max Tegmark, Anthony Aguirre, Martin Rees, Frank Wilczek / Dimensionless constants, cosmology and other dark matters

Across the range of all possibilities, our universe sits comfortably between these two extremes, occupying the spot marked by the star.

It seems clear that there are relatively few ranges of values for the numbers that would allow for development of any form of intelligent life.

Stephen Hawking

Between Order and Chaos

Life, or more generally, complexity, walks a narrow line between a suffocating simplicity and an untamable chaos.

On the side of simplicity, we have universes without structure — a universe that’s nothing but a diffuse gas, devoid of galaxies, stars, and planets. There are universes lacking heavy elements, chemical bonding, or atoms capable of linking into large and complex biomolecules.

On the side of chaos, we have universes lacking stability — stars that live fast and die young, galaxies swarmed by black holes, and planets plagued by passing stars, asteroid bombardment, and nearby gamma ray bursts and supernovae. There are universes where chemical bonds form and break too easily, and where all sunlight is ionizing.

Fortunately for us, ours is a universe that occupies a happy medium between simplicity and chaos. Here interesting things happen, but things are stable enough to reliably encode and copy information — a universal requirement for life, or anything that reproduces.

To some of us, it looks like we have to live with the idea that the constants of nature, the laws of nature, everything that we know about, somehow, was influenced by our own existence.

Leonard Susskind in “What We Still Don’t Know: Are We Real?” (2004)

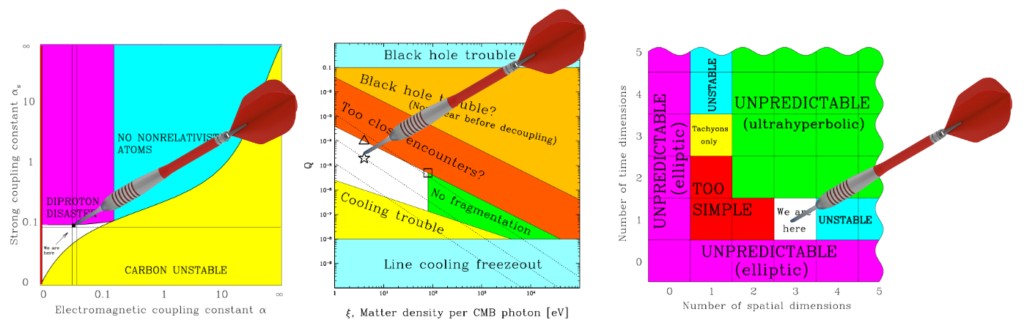

Counting our Luck

How lucky should we count ourselves? Are the odds of a life-supporting universe modestly small, like one in ten, or incredibly small, like one in a hundred billion?

One way to estimate this is to consider the possibility space for each of the fundamental constants of nature, and then look to see what fraction of that range is compatible with life.

Making life possible required not just one coincidence, but many. Each of the constants had to fall in a life-compatible range. Had any one been off, it would have sterilized the universe.

If there are 12 fundamental constants important to life, then the fraction of life-supporting universes, having our laws of physics, could be calculated as the volume of the 12-dimensional space compatible with life divided by the total 12-dimensional space of possible values.
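Written out, the fraction described above might look like the following. This is only a sketch: the symbols f, V_life, V_total, w_i, and W_i are labels introduced here for illustration, and the product form holds only under the added assumption that each constant has its own life-permitting window of width w_i inside a possible range of width W_i, independent of the other constants.

```latex
f = \frac{V_{\text{life}}}{V_{\text{total}}}
\qquad \text{and, if the windows are independent,} \qquad
f = \prod_{i=1}^{12} \frac{w_i}{W_i}
```

Because the fractions multiply, even modest narrowness in each constant compounds quickly across all twelve.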

But 12-dimensional spaces are hard to imagine, and even more difficult to depict on paper. Alternatively, we can depict this as six 2-dimensional areas, and consider just two constants at a time.

For example, we might pair up the constants (the weak force vs. the strong force, space dimensions vs. time dimensions, electron mass vs. proton mass, and so on) and graph six areas. Each area forms a dart board whose bull’s eye is the life-friendly range.

If the constants of nature were chosen randomly, then getting a life-friendly universe is equivalent to throwing a dart at a random spot on each of the six boards and hitting the bull’s eye all six times.

If the average area of the bull’s eyes is 10% of the total, then the odds of hitting all six with random throws are one in a million. If the area of the bull’s eye is 1% of the board, the odds fall to one in a trillion.

For a typical dart board, the area of the bull’s eye is just 1/1296th of the board. The odds of hitting six bull’s eyes in six random throws are 1 in 4.7 million trillion — so low as to have never happened in the history of darts.
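The dart-board odds quoted above can be checked with a few lines of arithmetic. The sketch below assumes six independent, uniformly random throws and the same bull’s-eye fraction on every board; the function name `odds_of_all_hits` is made up for this illustration.

```python
from fractions import Fraction


def odds_of_all_hits(bullseye_fraction, boards=6):
    """Return N such that the chance of hitting every bull's eye is 1 in N.

    Assumes independent, uniformly random throws, one per board, with the
    same bull's-eye fraction on each board.
    """
    p_all = Fraction(bullseye_fraction) ** boards  # probabilities multiply
    return 1 / p_all


if __name__ == "__main__":
    cases = [
        ("10% bull's eye", Fraction(1, 10)),
        ("1% bull's eye", Fraction(1, 100)),
        ("1/1296 bull's eye", Fraction(1, 1296)),
    ]
    for label, fraction in cases:
        n = odds_of_all_hits(fraction)
        print(f"{label}: 1 in {float(n):.3g}")
```

Exact fractions are used instead of floating point so that the results come out as whole numbers with no rounding error.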

But how big are the bull’s eyes in the case of fundamental constants? How big are the boards? Knowing both is necessary to compute the exact odds of a life-friendly universe.

In the graphs of life-friendly regions, the life-compatible areas occupy a narrow fraction of the possibility space. This suggests that across the range of all possible values, combinations supporting life are few and far between.

Of course, there might be other forms of intelligent life, not dreamed of even by writers of science fiction, that did not require the light of a star like the sun or the heavier chemical elements that are made in stars and are flung back into space when the stars explode. Nevertheless, it seems clear that there are relatively few ranges of values for the numbers that would allow the development of any form of intelligent life.

Stephen Hawking in “A Brief History of Time” (1988)

But calculating the exact probability of life is complicated. In most cases, science lacks an understanding of the possible ranges different constants might take and how likely different values are. This makes quantifying the exact likelihood of life difficult.

But there is one constant whose range and likelihood are both known. Further, it can be considered independently from the other constants. It plays no role in determining nuclear physics, chemistry or biology.

The Finest Tuning

In the dart boards of the fundamental constants, some bull’s eyes are smaller than others. We might say these parameters are “more finely-tuned” — greater precision was required to set those parameters.

Among all the known fundamental constants, one of them is the most finely-tuned of all. This finest tuning is \Lambda.

The cosmological constant, \Lambda, is understood to be the inherent energy of the vacuum. According to quantum field theory, the vacuum is a collection of overlapping particle fields, with one field for each kind of particle. Particles, then, can be understood as vibrations in their respective fields.

Each of the few dozen particle fields contributes some amount of energy, which can be positive or negative, towards the energy of the vacuum.

What’s remarkable is that when all of these independent field energies are summed, they cancel out to 120 decimal places:

\Lambda \approx 0.0000000000000000000000000000000000000 \newline 00000000000000000000000000000000000000000000 \newline 00000000000000000000000000000000000000002888

For there to be any structures, complexity, or life in this universe, it was necessary for \Lambda to be so small. But how likely was it?

Their smallness with respect to the [Planck scale] is not understood and is considered as ‘unnatural’ in relativistic quantum field theory, because it seems to require precise cancellations among much larger contributions. If these cancellations happen for no fundamental reason, they are ‘unlikely’, in the sense that summing random order one numbers gives 10^{−120} with a ‘probability’ of about 10^{−120}.

Authors of “Direct anthropic bound on the weak scale from supernovae explosions” (2019)

To get a feeling for how unlikely such an occurrence is, imagine we had an infinitely-sided die. That is, rather than a standard six-sided die we had one with infinitely many sides: a sphere.

On this die, we can denote each of the infinite values existing between -1 and +1. We might do this by having black denote -1, white denote +1, and every shade of gray between them marking the continuous range.

Having the magnitude of the vacuum energy be less than 10^{−120} is equivalent to rolling this spherical die a few dozen times, adding up the numbers, and finding that the magnitude of the result is less than 10^{−120}.

This is extraordinarily unlikely.
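The spherical-die picture can be tried out numerically. A window as small as 10^{−120} can’t be simulated directly, so the sketch below simulates a much wider window and then extrapolates with a normal approximation to the sum. The choice of 24 rolls is a hypothetical stand-in for “a few dozen”, and the function names are invented for this illustration.

```python
import math
import random


def near_zero_probability_mc(n_terms, eps, trials, seed=0):
    """Monte Carlo estimate of P(|sum| < eps) for n_terms rolls in [-1, 1]."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    hits = 0
    for _ in range(trials):
        total = sum(rng.uniform(-1.0, 1.0) for _ in range(n_terms))
        if abs(total) < eps:
            hits += 1
    return hits / trials


def near_zero_probability_normal(n_terms, eps):
    """Normal approximation to the same probability, valid for small eps.

    Each roll has variance 1/3, so the sum has variance n_terms / 3.
    The chance of landing in (-eps, eps) is about 2 * eps * density at zero.
    """
    sigma = math.sqrt(n_terms / 3.0)
    density_at_zero = 1.0 / (sigma * math.sqrt(2.0 * math.pi))
    return 2.0 * eps * density_at_zero


if __name__ == "__main__":
    rolls = 24  # assumed value for "a few dozen"
    print("simulated, eps=0.1:  ", near_zero_probability_mc(rolls, 0.1, 50_000))
    print("approximate, eps=0.1:", near_zero_probability_normal(rolls, 0.1))
    print("approximate, eps=1e-120:", near_zero_probability_normal(rolls, 1e-120))
```

The probability shrinks in direct proportion to the width of the window, which is why a result within 10^{−120} of zero has a chance on the order of 10^{−120}.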

To put it in context, consider that the odds of winning a national lottery are about one in a hundred million — or one in 10^{8}.

One in 10^{120} represents equivalent odds to winning a national lottery 15 times in a row. We definitely are winners in a cosmic lottery!
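The figure of 15 comes from multiplying the odds of independent wins, assuming each win carries the one-in-10^{8} odds given above:

```latex
\left(10^{8}\right)^{15} = 10^{8 \times 15} = 10^{120}
```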

Could it all be a coincidence?

At a certain point, luck becomes implausible as an explanation. If the same person won a national lottery 15 times in a row, we would look to other answers besides mere luck to explain it.

Perhaps someone rigged the game. Or perhaps the person used their winnings to continue buying up all possible tickets.

It is a mystery that demands an explanation.

The extraordinary odds we overcame to win the right to exist seem to be telling us something important about existence and reality.

But what?

Why the Universe is Made for Life

Sir Martin Rees is professor of cosmology and astrophysics at the University of Cambridge, former Master of Trinity College, former President of the Royal Society and the current Astronomer Royal.

Rees has spent much of his life on the question of why the universe is suited for life. He authored one of the first papers on the subject, wrote a book on it, and even hosted a television show exploring the topic.

In his book, “Just Six Numbers”, Rees describes three known answers to the question of why the universe is finely-tuned for life:

- Coincidence
  - We’re just incredibly lucky and there is no explanation or reason.
- Providence
  - Our universe was designed, chosen, or created to allow life.
- Multiverse
  - There are many universes, most are barren, but some permit life.

Let’s consider the implications for each of these answers.

Coincidence

However small it may be, there is a chance that there is a single universe, neither designed, nor one of many, which just happens to have physical laws and constants that are life-permitting.

Perhaps things are not so hopeless for life as we estimated. It might be that “life finds a way” in a large fraction of possible universes.

If so, then we shouldn’t be so surprised.

But there are reasons to doubt this. Life requires a special environment. Life must be capable of maintaining, repairing, copying, and mutating its information patterns. The environment must allow life to arise and self-assemble and also self-replicate.

Across possible environments, few appear to support both needs. Most seem to miss the necessary balance between simplicity and chaos.

If the environment is too simple, there’s no hope of getting self-arising, self-replicating forms. If the environment is too chaotic, there’s no hope of preserving information across generations.

John von Neumann designed the first self-replicating machine. It was meant to operate within a cellular automaton, a specially crafted environment having its own set of rules, i.e. its own “laws of physics.”

But in the set of possible cellular automata, only a small fraction has the right balance of complexity and stability to support self-replication.

It seems plausible that, even in the space of cellular automata, the set of laws that permit the emergence and persistence of complexity is a very small subset of all possible laws. […] The point is that, however many ways there are of being interesting, there are vastly many more ways of being trivially simple or utterly chaotic.

Luke A. Barnes in “The Fine-Tuning of the Universe for Intelligent Life” (2011)
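To get a feel for how different rule sets behave, here is a minimal sketch using one-dimensional “elementary” cellular automata. These are a far simpler stand-in for von Neumann’s automaton, chosen here only for illustration. Rules 0, 30, and 110 are picked as commonly cited examples of trivially simple, chaotic-looking, and complex behavior respectively; the function names are invented for this example.

```python
def step(cells, rule):
    """Apply one update of an elementary cellular automaton.

    cells is a list of 0s and 1s; the row wraps around at the edges.
    rule is a number from 0 to 255 whose bits give the new cell value
    for each of the 8 possible (left, center, right) neighborhoods.
    """
    n = len(cells)
    new_cells = []
    for i in range(n):
        left, center, right = cells[i - 1], cells[i], cells[(i + 1) % n]
        index = (left << 2) | (center << 1) | right  # neighborhood as 0..7
        new_cells.append((rule >> index) & 1)  # look up that bit of the rule
    return new_cells


def run(rule, width=31, steps=15):
    """Start from a single live cell and return every generation."""
    cells = [0] * width
    cells[width // 2] = 1
    history = [cells]
    for _ in range(steps):
        cells = step(cells, rule)
        history.append(cells)
    return history


def render(history):
    """Draw live cells as '#' and dead cells as '.'."""
    return "\n".join("".join("#" if c else "." for c in row) for row in history)


if __name__ == "__main__":
    for rule in (0, 30, 110):
        print(f"Rule {rule}")
        print(render(run(rule)))
        print()
```

Under Rule 0 everything dies after one step, while Rules 30 and 110 keep producing new patterns. This toy shows nothing about self-replication itself, only how strongly the outcome depends on the chosen “laws.”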

As Richard Dawkins put it, “however many ways there may be of being alive, it is certain that there are vastly more ways of being dead.”

Even in a life-friendly universe like ours, we find ourselves in a very special place. Most of the universe is intergalactic space: a cold, dark void having just one hydrogen atom per cubic meter.

Our environment is some 10^{30} times denser than average. As Max Tegmark noted, “only a thousandth of a trillionth of a trillionth of a trillionth of our Universe lies within a kilometer of a planetary surface.” It’s doubtful life could arise in the near vacuum of intergalactic space, nor is it likely to exist in the hot cores of planets or stars.

Most of our universe is inhospitable. Life can’t always find a way.

The rarity of life in the universe speaks to how uncommon life may be across the set of possible universes. Even where life is known to be possible it appears to be exceedingly rare. (See: “Are we alone?”)

The sensitivity of life to the smallest changes in the fundamental constants further suggests it takes a rare mix of particles, forces, and initial conditions working together to make a universe with life.

It is logically possible that parameters determined uniquely by abstract theoretical principles just happen to exhibit all the apparent fine-tunings required to produce, by a lucky coincidence, a universe containing complex structures. But that, I think, really strains credulity.

Frank Wilczek in “Physics Today” (2006)

But if incredible luck is not the answer, then someone, or something, must have set things up just right.

Providence

Divine providence is another possible answer to the mystery. This is the belief that the universe was designed and created with intention, perhaps to be interesting, or to support the existence of intelligent life.

Fred Hoyle, who had been a lifelong atheist, was led by his discovery of the carbon-12 excited state to believe in a “super-calculating intellect” who must have designed the properties of the carbon atom.

In this, he is not alone.

Arno Penzias, who discovered the cosmic hum of the big bang, said “Astronomy leads us to a unique event, a universe which was created out of nothing, one with the very delicate balance needed to provide exactly the conditions required to permit life, and one which has an underlying (one might say ‘supernatural’) plan.”

A life-giving factor lies at the centre of the whole machinery and design of the world.

John Archibald Wheeler in the foreword to “The Anthropic Cosmological Principle” (1986)

If there is one universe, with one set of laws, divine providence is a natural conclusion. So many bullets were dodged in the many fine-tunings that it all being a coincidence doesn’t hold water.

While some physicists were open to this possibility, most were unsettled by it. Fine-tunings suggest a fine-tuner. But once a deity is invoked as part of an explanation, further scientific progress halts — as any line of inquiry can be answered with, “it was God’s plan.”

This is a dislike of mixing religion into physics. I think they were somewhat afraid that if it was admitted that the reason the world is the way it is has to do with our own existence, that that could be hijacked by the creationists — by the intelligent designers. And of course what they would say is “Yes, we always told you so. There is a benevolent somebody way up high in the universe who created the universe exactly so that we could live.” I think physicists shrank at the idea of getting involved in such things.

Leonard Susskind in “What We Still Don’t Know: Are We Real?” (2004)

But the claim that creation or a creator is unscientific is now in doubt. Today’s scientists have already dipped their toes into creating universes. They do so in highly detailed computer simulations.

The cosmologist Alan Guth even thinks it is possible in principle to create and split off an entire new physical universe in the laboratory.

The odd thing is that you might even be able to start a new universe using energy equivalent to just a few pounds of matter. Provided you could find some way to compress it to a density of about 10 to the 75th power grams per cubic centimeter, and provided you could trigger the thing, inflation would do the rest.